Field entry, 7 May.

Once Deep Research started to feel real, the obvious next question became uncomfortable: what if twenty minutes is the toy version?

Not useless. Twenty minutes is already enough to make an agent search wider, read with more discipline, build a dossier, and produce something closer to a report than an answer. But there is a class of question that does not want a better search loop. It wants a researcher.

The difference is not merely duration, though duration is where the trouble announces itself. A twenty-minute research run can cover a landscape. A twenty-four-hour run has to live there. It needs memory, rhythm, budget, checkpoints, verification, sleep, recovery, and enough self-suspicion not to spend hour sixteen polishing a theory that hour six already quietly killed.

That was the long-running research design: take the concept from Deep Research and extend it into an agent that can spend a day, or several days, working on a hard problem statement. Not just searching the web. Crunching data. Reasoning. Writing tests. Running simulations. Exploring prior art. Building small artifacts. Falsifying its own ideas. Producing either a comprehensive paper or a working code result when the problem has an executable shape.

This sounds grand until the first design decision, which is almost disappointingly practical: the core reasoning loop should be single-threaded.

That does not mean alone. It means one spine owns the work. Search agents can gather evidence. Critics can attack claims. A smart friend can be called in for a bounded second opinion. Parallel samples can compete on a proof step or kernel implementation when there is a sharp verifier. But the main thread, the one making the decisions and updating the theory of the problem, stays coherent.

The reason is simple and slightly annoying: shared writes carry hidden decisions. Two agents editing the same theory, codebase, or paper at once do not merely divide labor. They divide assumptions. Then the merge step becomes a séance in which everyone pretends the contradictions are formatting issues.

For broad discovery, parallelism is excellent. For coherent invention, it needs a leash.

The second design decision is the one I trust most: the sandbox is the truth oracle whenever a machine check exists.

Agents can reason beautifully about a thing that a compiler would reject in half a second. They can defend an optimization that a benchmark would embarrass before lunch. They can talk themselves into a proof-shaped object that Lean would not let through the door. The long-running agent therefore has to prefer the cheapest decisive check over more inner monologue: compile, unit test, property test, simulator, performance counter, formal verifier, critic, web corroboration, in that order.

This is where the work stops being “research” in the soft browser-tab sense and becomes something closer to a lab bench. If the claim is empirical, run the smallest experiment that could falsify it. If the claim is a program invariant, test it or prove it. If the claim is conceptual and no machine oracle exists, use a clean-context critic and force the main agent to answer the attack before moving on.

The clean-context part matters. A critic who has read the whole charming story of how we got here is already emotionally compromised. Give the critic the claim, the supporting evidence, and none of the journey. Make it mean.

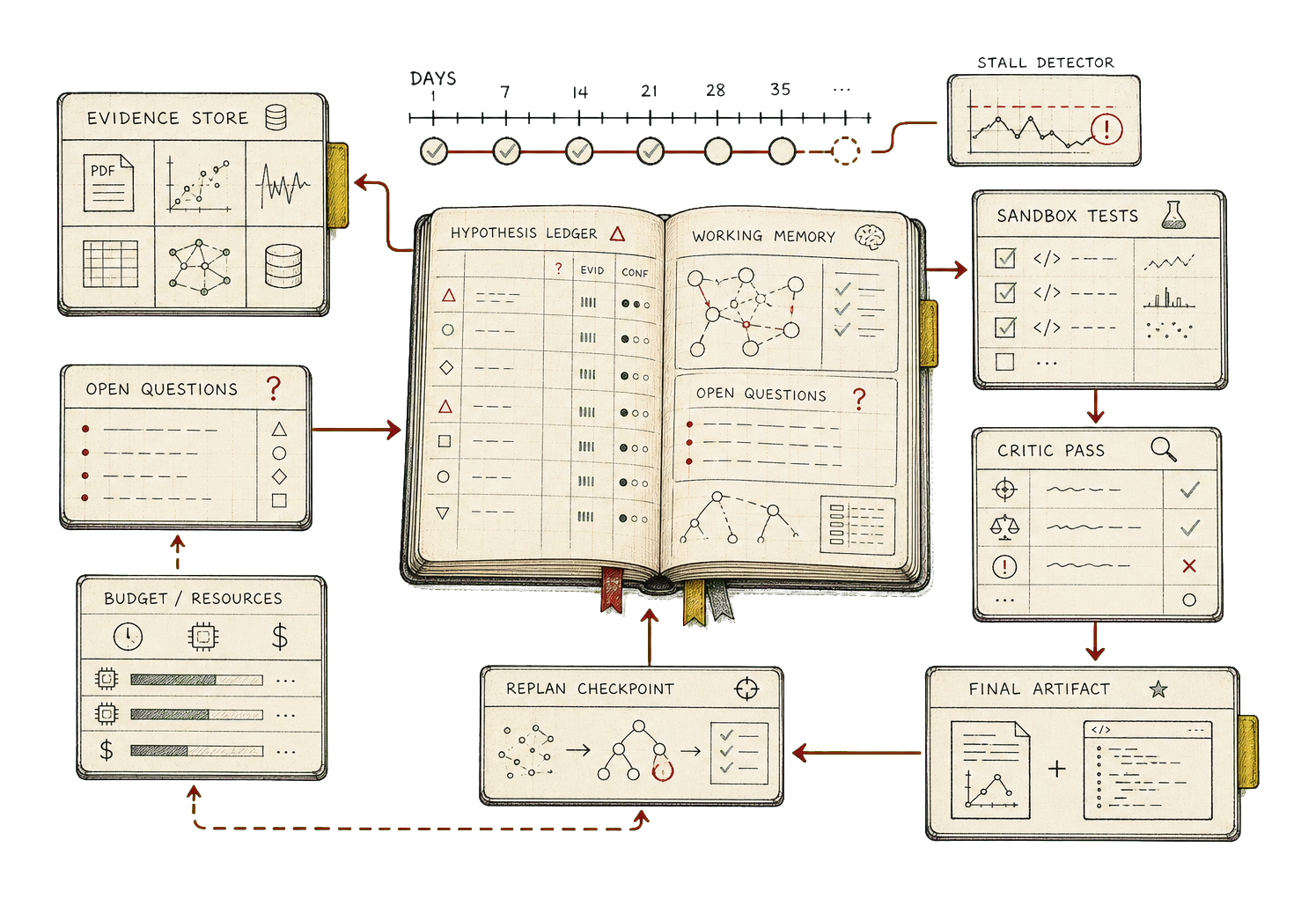

The load-bearing artifact in the design is not the transcript. It is the hypothesis ledger.

Every loop has to do something legible: validate a hypothesis, falsify one, resolve a contradiction, close a proof step, shrink the open question set, or reach a planned checkpoint. If none of those things happens for several loops, the agent is not “thinking deeply.” It is spinning, and spinning with a large context window is still spinning, just with better upholstery.

That is why the long-running design includes explicit anti-runaway machinery: per-loop, per-phase, and per-session caps; a burn-rate monitor; a stall detector; scheduled replans; forced checkpoints; and termination criteria that distinguish success, sufficient progress, budget exhaustion, and irrecoverable confusion. This sounds bureaucratic until one remembers the alternative, which is a very expensive paragraph that says “after extensive exploration” with the haunted confidence of a system that has forgotten what question it was answering.

Long-running work also needs a different relationship with context. Even enormous context windows rot. The agent needs a working window, a durable filesystem, structured ledgers, archived observations, phase summaries, and a resumption ritual. The filesystem becomes memory not because files are fashionable, but because they are inspectable, restorable, diffable, and wonderfully indifferent to the model’s mood.

The workspace shape is plain on purpose: progress log, plan, phase summaries, hypotheses, evidence, contradictions, open questions, observations, code, experiments, proofs, documents, budget. The vector store may help find things, but it does not become the source of truth. Primary state belongs in artifacts one can open and judge.

This is the part of long-running agents that feels least magical and most important. If a session can run for thirty-six hours, it must be able to restart without pretending reincarnation is memory. Read the progress log. Read the current plan. Read the ledger tail. Read the open contradictions. Read the git history. Rehydrate deliberately. Name the next concrete action. Notice if anything looks broken.

The agent does not need to remember everything in the human sense. It needs to preserve the right things in the right forms.

There are two terminal shapes in the design. In paper mode, the agent writes a cited dossier with findings, contradictions, gaps, and references tied back to the evidence ledger. It must surface negative results instead of burying the approaches that failed. In code mode, it finalizes a repository: tests passing, replication instructions, benchmarks where relevant, architecture notes, changelog, release tag, and the experimental debris moved somewhere honest.

The shared core matters because the distinction between “paper” and “code” arrives late. Both require the same discipline before the terminal phase: frame the problem, plan the route, explore, hypothesize, verify, reflect, replan, and carry forward only what survived contact with evidence.

There is a lovely ambition hiding inside this rather practical machinery. The aim is not only to answer big questions more thoroughly. It is to let an agent produce new work: a design nobody had yet assembled, a proof route, a performance trick, a plausible invention with the boring receipts attached. Novelty without verification is just style. Verification without imagination is a test harness staring at a wall. The researcher agent needs both.

I keep returning to the example that motivated the design: spending more than a day on a genuinely hard technical problem, such as finding a novel path for LLM inference on an ASIC and helping program it. That is not a search task. It is not even a writing task. It is a sustained investigation with instruments.

The agent has to read prior work, form hypotheses about mappings and kernels, write small experiments, run simulators, inspect failures, update the ledger, call a critic, abandon pretty dead ends, and eventually produce something that can be checked by a machine or inspected by a person who knows what failure smells like.

At that point the product question changes. It is no longer “can the agent answer this?” It is “can the agent keep working without losing the thread, lying to itself about progress, or spending the user’s money on motion?”

That is why the real clock matters.

A short research mode can be judged by the quality of its final report. A long-running researcher has to be judged by its habits over time: how it stores state, how it measures progress, how it recovers, how it tests, how it asks for help, how it knows when to stop. The answer at the end is still the artifact, but the artifact is only trustworthy if the work had a disciplined way to survive the night.

Field note

The leap from Deep Research to long-running research is not a bigger budget button. It is a change in species: from a wide reader that writes a grounded memo to a durable researcher that can hold a theory, test it, revise it, and leave behind enough evidence that the next morning does not have to start by guessing what yesterday meant.