Field entry, 6 May.

It started, as these things often do, with something that should have been boring: make the agent do useful work, show what it did, and avoid lying about the parts in between.

I went back through three private case studies this week, reading them less like codebases and more like travel journals. The first began in February as a local all-in-one workbench. The second arrived in April as an edge-native translation of the same instincts. The third became a live, durable kernel, with events, projections, context ledgers, work items, approval gates, and a much lower tolerance for theatre.

The sequence is not a heroic progression from naive to correct. That would be too tidy, and also a little rude to the earlier versions, which did the difficult job of making the later mistakes visible. The more honest version is that each generation answered the question it could see, then left behind enough scar tissue to make the next question sharper.

The first workbench

The first version solved the problem by putting everything close together. Chat surface, tool runtime, local database, terminal sessions, automations, skills, channel adapters, model aliases, code-server sessions, and swarm experiments all lived in one application. It was not elegant, exactly, but it had the honest brutality of a workbench: if something broke, the pieces were close enough together that you could usually hear the crack.

The commit history has the feeling of a packed suitcase. The initial import on 17 February was already broad rather than cautious. Four days later a large platform expansion landed with skills, prompt documents, services, UI, and infrastructure. That same day also produced the more revealing fixes: pass the output-token cap correctly, stop auto-continuing forever, reduce chat heading sizes, remove personality presets.

Those are small changes only if you have never built one of these systems. They are exactly where the lesson lives. The agent was not failing because it lacked enough agency. It was failing because agency without budget, transcript discipline, tool boundaries, and output recovery is just an expensive way to make uncertainty scroll.
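
Here is what "agency without budget" means in practice. The Python sketch below is a turn loop that passes the *remaining* output-token cap to each call and stops after a fixed number of continuations, so it cannot auto-continue forever. `run_turn`, `Step`, and the stop-reason strings are hypothetical names chosen for illustration. The model call is a stand-in function, not any real provider API.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Step:
    text: str
    tokens: int
    wants_more: bool  # the model stopped at the cap and would like to keep going


@dataclass
class TurnResult:
    chunks: list[str]
    tokens_used: int
    stop_reason: str  # "done", "token_budget", or "continuation_limit"


def run_turn(step_fn: Callable[[int], Step], max_tokens: int, max_continuations: int) -> TurnResult:
    """Call the model until it finishes or a budget runs out; never loop forever."""
    chunks: list[str] = []
    used = 0
    for _ in range(max_continuations + 1):  # first call plus at most N continuations
        remaining = max_tokens - used
        if remaining <= 0:
            return TurnResult(chunks, used, "token_budget")
        step = step_fn(remaining)  # pass the remaining cap, not the original one
        chunks.append(step.text)
        used += step.tokens
        if not step.wants_more:
            return TurnResult(chunks, used, "done")
    # Continuation budget spent: stop and let the user ask for another turn.
    return TurnResult(chunks, used, "continuation_limit")


# Usage: a fake model that always wants to continue.
def endless(remaining: int) -> Step:
    return Step("...", min(100, remaining), True)


print(run_turn(endless, max_tokens=1000, max_continuations=3).stop_reason)  # continuation_limit
```

The explicit stop reason is the useful part. The interface can say *why* the turn ended and offer another one, instead of letting uncertainty scroll.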

The first lesson was that an agent is not a prompt. It is a runtime with state, permissions, tools, recovery, and a user-visible account of what happened. The prompt matters, obviously, but it is the visible surface of a much larger system. Underneath it is the unglamorous machinery: schemas, approval policy, path checks, result truncation, command lifetimes, session ids, logs, persistence, and the question of whether a tool result can be traced back to the tool call that produced it.

This is where my taste began to harden. I became much less interested in agent autonomy as a vibe and much more interested in agent boundaries as a product feature. A tool should know whether it is read-only. A command should know where it is allowed to run. A long response should know when to stop and ask for another turn. A skill should be inspectable as a file, not a smell in the prompt.
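
The sketch below shows these boundaries expressed as data rather than prompt text. It assumes a hypothetical `ToolSpec` that declares whether the tool is read-only and which directory it may touch. The path check uses the standard library's `pathlib` (`Path.is_relative_to` needs Python 3.9 or later). The approval rule, under which non-read-only tools require explicit approval, is an illustrative policy and not a description of any of the three case studies.

```python
from dataclasses import dataclass
from pathlib import Path


@dataclass(frozen=True)
class ToolSpec:
    name: str
    read_only: bool      # declared by the tool, not inferred from the prompt
    allowed_root: Path   # the only directory this tool may touch


class ToolDenied(Exception):
    pass


def check_path(spec: ToolSpec, raw: str) -> Path:
    root = spec.allowed_root.resolve()
    # Joining an absolute `raw` replaces root entirely; resolve() collapses ".." and
    # follows any symlinks that exist, so both escape routes end up outside `root`.
    target = (root / raw).resolve()
    if not target.is_relative_to(root):
        raise ToolDenied(f"{spec.name}: {raw!r} is outside {root}")
    return target


def authorize(spec: ToolSpec, raw_path: str, approved: bool) -> Path:
    target = check_path(spec, raw_path)
    if not spec.read_only and not approved:
        raise ToolDenied(f"{spec.name}: writes need explicit approval")
    return target


read_file = ToolSpec("read_file", read_only=True, allowed_root=Path("/workspace/project"))
write_file = ToolSpec("write_file", read_only=False, allowed_root=Path("/workspace/project"))
```

With these specs, `authorize(read_file, "../../etc/passwd", approved=False)` raises `ToolDenied`, and so does an unapproved call to `write_file`. The refusal comes from the runtime, not from the model's good manners.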

The cloud was not hosting

The second version made a clean architectural bet: the runtime should not pretend to be a laptop.

That sounds obvious now, which is how one can tell the lesson has settled. At the time, it was less an obvious truth than a useful disturbance. Processes became isolates. Files became durable workspace views. Cron became scheduled state. Browser sessions, model calls, memory, publishing, and provider-specific behavior were forced through platform-shaped boundaries. The question stopped being “can this run in the cloud?” and became “what is the cloud-native object that owns this kind of truth?”

That is a much better question.

The history tells the story in the stress points. The edge rewrite landed on 9 April. Two days later came swarm runtime and chat performance work. The following day brought a provider-native runtime and long-run task overhaul. A few days after that, the chat stream was rebuilt and runtime recovery hardened.

In other words, moving the code was not the work. Moving the invariants was the work.

The second version taught me that the transcript cannot be the only durable thing. A transcript is useful, but it is a projection with strong feelings. It wants to be friendly. It wants to omit ugly internal edges. It wants to make a half-finished run look like a story. That is fine for readers and terrible for recovery. Once an agent can outlive a browser tab, a model stream, or a particular warm process, every vague “working on it” becomes a promise the system has to keep.

The more precise lesson was that cloud architecture is product architecture. If a turn can be interrupted and resumed, the user experiences that as trust. If a tool call can be retried without duplicating a destructive action, the user experiences that as calm. If state has a named durable home, the interface can be simple without being fraudulent.
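
One common way to get that calm is an idempotency key. Each side effect is recorded under a stable key, here a run id plus a tool-call id (both hypothetical). On retry, the recorded result is returned instead of performing the action again. This sketch keeps the ledger in memory. A real system would need it to be durable. It would also have to cover the window between performing the action and recording it, which this sketch does not handle.

```python
from typing import Any, Callable


class ToolLedger:
    """Records completed side effects by idempotency key (in memory here, for illustration)."""

    def __init__(self) -> None:
        self._done: dict[str, Any] = {}

    def run_once(self, key: str, action: Callable[[], Any]) -> Any:
        if key in self._done:
            # A retry after an interruption: replay the recorded result, don't act twice.
            return self._done[key]
        result = action()
        self._done[key] = result
        return result


sent: list[str] = []


def send_deploy_notice() -> str:
    sent.append("notice")
    return f"sent #{len(sent)}"


ledger = ToolLedger()
first = ledger.run_once("run-7:call-3", send_deploy_notice)
retry = ledger.run_once("run-7:call-3", send_deploy_notice)  # same key, no second send
```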

The transcript became a projection

The third version is where the lesson becomes less flattering and more useful. The problem was not that the transcript needed better rendering. The problem was that the transcript had been asked to do too many jobs.

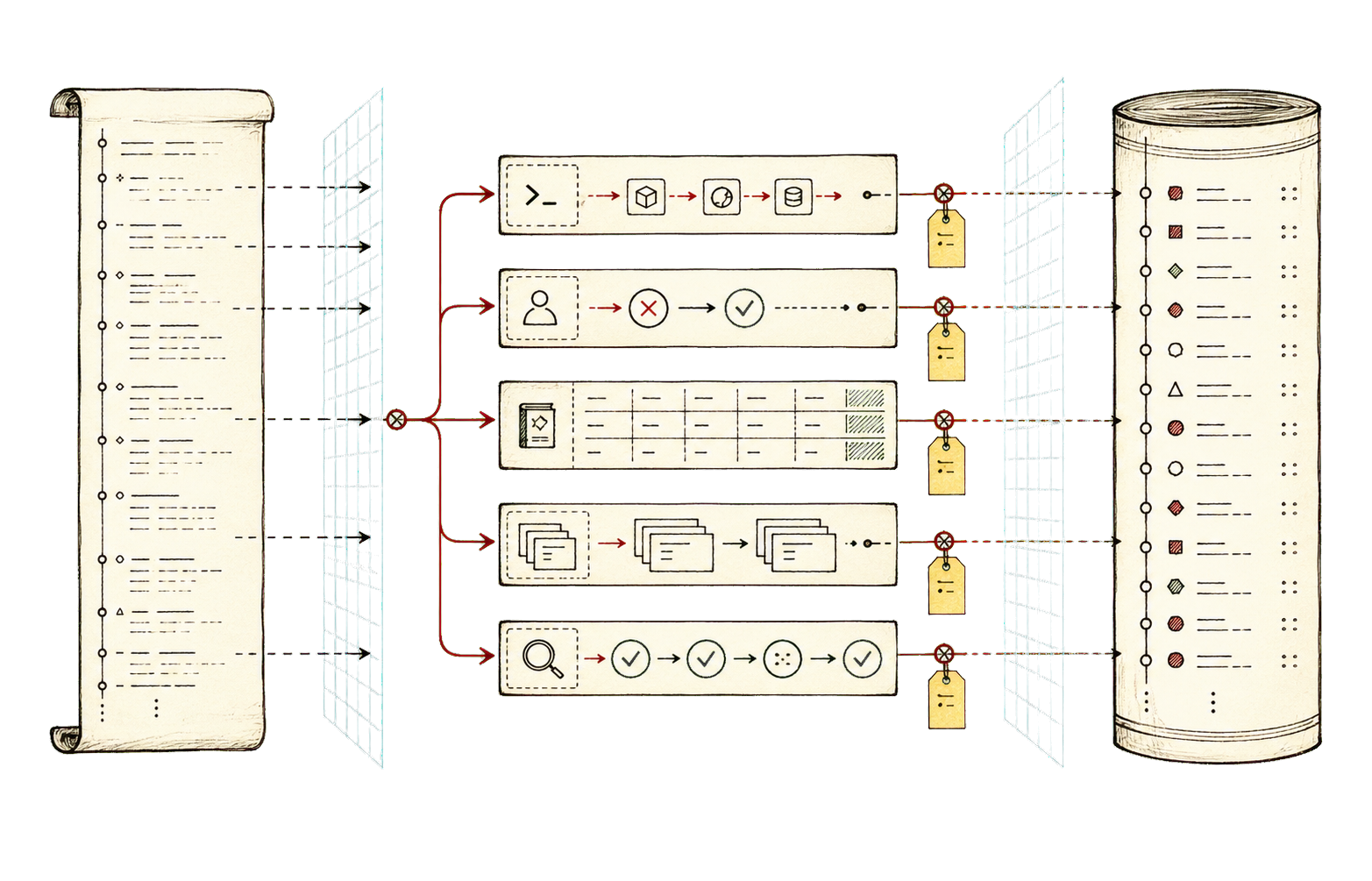

It had been the model history, the user interface, the recovery log, the tool ledger, the approval queue, the progress indicator, and the comforting little theatre in which work appears to happen. The live kernel splits those apart. Events are the truth. The transcript is one projection of them.

That sounds abstract until a browser disconnects, a model stream stalls, a worker keeps running, and the page still knows what happened when it comes back.
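
Here is a minimal sketch of "events are the truth, the transcript is one projection". It assumes a hypothetical append-only `EventLog` with sequence numbers. When the page reconnects, it asks for everything after the last sequence number it saw. The transcript is not stored as the record itself. It is rebuilt by folding over the log.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Event:
    seq: int
    kind: str  # e.g. "text", "tool_call", "tool_result", "run_finished"
    data: dict


@dataclass
class EventLog:
    events: list[Event] = field(default_factory=list)

    def append(self, kind: str, data: dict) -> Event:
        event = Event(len(self.events) + 1, kind, data)
        self.events.append(event)
        return event

    def since(self, seq: int) -> list[Event]:
        """Everything a client missed after the last sequence number it saw."""
        return [e for e in self.events if e.seq > seq]


def transcript(events: list[Event]) -> list[str]:
    """One projection of the log: the friendly, human-readable view."""
    lines: list[str] = []
    for e in events:
        if e.kind == "text":
            lines.append(e.data["text"])
        elif e.kind == "tool_call":
            lines.append(f"[running {e.data['name']}]")
        elif e.kind == "run_finished":
            lines.append("[done]")
        # tool_result events stay in the log for other projections; the transcript skips them
    return lines


# The worker keeps appending while the browser is gone.
log = EventLog()
log.append("text", {"text": "Looking at the repo."})
log.append("tool_call", {"name": "grep", "call_id": "c1"})
seen = 2  # the page disconnected here
log.append("tool_result", {"call_id": "c1", "output": "src/app.py:42"})
log.append("text", {"text": "Found it."})
log.append("run_finished", {})

missed = log.since(seen)  # what the returning page replays
```

The transcript is allowed to be friendly because it is no longer the only record. A tool ledger or an approval queue would be another function over the same `events`.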

The third case study moved toward durable live events, projection stores, replay, recovery, typed tool rows, context diffs, work items, explicit runtime routes, and model transport codecs. It also got much more serious about path-addressed multi-agent work. Subagents became less like miniature assistants wandering about with clipboards and more like scoped workers with task packets, ownership, abort paths, mirrored baselines, status, and verification.

This is the point where “multi-agent” became interesting to me again, mostly because it stopped meaning “several chat bubbles.” The useful agent is not the one with the most dramatic permission to act. It is the one with the clearest boundary around each action. A worker needs a task packet. A repo edit needs an owner. A deploy needs an approval trail. A tool result needs to be paired with the tool call that produced it. A verification pass needs to read the result, not admire the plan.

The test suite followed the same path. The first generation asked whether the pieces worked. The second asked whether the runtime survived. The third started turning product claims into hostile little instruments: no raw protocol leakage, no missing paired tool results, no silent stalled stream, no approval bypass under a friendly setting, no pretend completion before browser validation, no context ledger drift after continuation.
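
The "no missing paired tool results" instrument might look something like the check below. A hypothetical `unpaired_tool_events` function walks an event stream and reports calls without results, results without calls, and duplicate results. The event shape (`type`, `call_id`) is an assumption made for illustration.

```python
def unpaired_tool_events(events: list[dict]) -> list[str]:
    """Return problems found; an empty list means every call got exactly one later result."""
    open_calls: set[str] = set()
    closed: set[str] = set()
    problems: list[str] = []
    for e in events:
        call_id = e.get("call_id")
        if e["type"] == "tool_call":
            if call_id in open_calls or call_id in closed:
                problems.append(f"duplicate call {call_id}")
            open_calls.add(call_id)
        elif e["type"] == "tool_result":
            if call_id in open_calls:
                open_calls.remove(call_id)
                closed.add(call_id)
            elif call_id in closed:
                problems.append(f"second result for {call_id}")
            else:
                problems.append(f"result without call {call_id}")
    problems.extend(f"call {c} never got a result" for c in sorted(open_calls))
    return problems


# Test-style usage: a clean stream passes.
ok = [
    {"type": "tool_call", "call_id": "a"},
    {"type": "tool_result", "call_id": "a"},
]
assert unpaired_tool_events(ok) == []
```

Run against the event log after a replay or continuation, a check like this turns "tool results are paired" from a claim into something a test can fail.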

There is a pleasant cruelty to that kind of testing. It takes the sentence “the agent can do this” and asks the rude follow-up: under which interruption, through which projection, with whose permission, after which replay, and with what visible evidence?

Provider abstraction was too flat

Another lesson was less architectural and more social: generic provider abstraction is often a polite way to lose important behavior.

At first, the temptation is to hide model providers behind one common shape. This works until the differences stop being cosmetic. Streaming semantics differ. Tool-call formats differ. Token counting differs. Recovery paths differ. Some errors should be withheld until the retry path decides whether the user needs to see them. Some transports should be native because the provider is giving you useful structure, not merely bytes with an accent.

The second generation moved toward provider-native semantics. The third kept that lesson but made the transport less visible to the rest of the system. That feels like the right split: preserve provider reality at the boundary, normalize into durable internal events as quickly as possible, and never let a UI component become the only place that knows what a model stream meant.
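
The sketch below illustrates that split using two invented providers. Their stream chunk shapes are made up for this example and are not any real vendor's format. Each adapter keeps the provider's own structure at the boundary, for example by assembling tool arguments that arrive in fragments. Both adapters emit the same internal events, so nothing downstream needs to know which provider spoke.

```python
import json
from typing import Iterable, Iterator


def from_provider_a(chunks: Iterable[dict]) -> Iterator[dict]:
    """Hypothetical provider A: text as {"delta": str}, tool calls arrive whole."""
    for c in chunks:
        if "delta" in c:
            yield {"kind": "text", "text": c["delta"]}
        elif "tool_call" in c:
            tc = c["tool_call"]
            yield {"kind": "tool_call", "call_id": tc["id"], "name": tc["name"], "args": tc["args"]}
        elif "error" in c:
            # Recorded, not displayed: the retry layer decides whether the user ever sees it.
            yield {"kind": "provider_error", "retryable": bool(c["error"].get("retryable")), "detail": c["error"]}


def from_provider_b(chunks: Iterable[dict]) -> Iterator[dict]:
    """Hypothetical provider B: tool arguments stream as JSON fragments."""
    pending: dict[str, dict] = {}
    for c in chunks:
        kind = c["type"]
        if kind == "text":
            yield {"kind": "text", "text": c["value"]}
        elif kind == "tool_start":
            pending[c["id"]] = {"name": c["name"], "args": ""}
        elif kind == "tool_args":
            pending[c["id"]]["args"] += c["fragment"]
        elif kind == "tool_end":
            p = pending.pop(c["id"])
            # Emit only at tool_end, after parsing; a half-streamed call never leaks out.
            yield {"kind": "tool_call", "call_id": c["id"], "name": p["name"], "args": json.loads(p["args"])}
```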

Context became engineering

Context management also stopped being prompt decoration and became engineering.

The early version had the usual pressure: conversations grow, tool outputs are noisy, and the model can drown in its own helpfulness. The later versions developed a more deliberate vocabulary: hot, warm, and cold context; compacted summaries; tool-result stubs; budget checks; reference context; diffs; continuation bundles; knowledge extraction; retrieval boundaries.

The important shift is that context stopped being merely “what we send to the model.” It became an accounting system for what the model is allowed to remember, what the user can inspect, what can be reconstructed, and what must not be silently forgotten.
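
The sketch below is a rough illustration of context as accounting. It fills a token budget starting with hot items. Warm items that do not fit are replaced with retrievable stubs. Every exclusion is recorded in a ledger rather than dropped silently. The tier names come from the text above. `build_context`, `ContextLedger`, the stub format, and the four-characters-per-token estimate are all assumptions, and the estimate is not a real tokenizer.

```python
from dataclasses import dataclass, field


def approx_tokens(text: str) -> int:
    # Crude stand-in (about four characters per token); use the provider's tokenizer in practice.
    return max(1, len(text) // 4)


@dataclass
class ContextItem:
    item_id: str
    tier: str  # "hot", "warm", or "cold"
    text: str


@dataclass
class ContextLedger:
    included: list[str] = field(default_factory=list)
    stubbed: list[str] = field(default_factory=list)   # shortened; full text retrievable by id
    excluded: list[str] = field(default_factory=list)  # left out, but recorded, not forgotten


TIER_ORDER = {"hot": 0, "warm": 1, "cold": 2}


def build_context(items: list[ContextItem], budget: int) -> tuple[list[str], ContextLedger]:
    ledger = ContextLedger()
    parts: list[str] = []
    used = 0
    # sorted() is stable, so items keep their original order within a tier.
    for item in sorted(items, key=lambda i: TIER_ORDER[i.tier]):
        cost = approx_tokens(item.text)
        if used + cost <= budget:
            parts.append(item.text)
            used += cost
            ledger.included.append(item.item_id)
            continue
        stub = f"[{item.item_id}: {cost} tokens omitted, retrievable by id]"
        stub_cost = approx_tokens(stub)
        if item.tier != "cold" and used + stub_cost <= budget:
            parts.append(stub)
            used += stub_cost
            ledger.stubbed.append(item.item_id)
        else:
            ledger.excluded.append(item.item_id)
    return parts, ledger
```

The ledger is what makes the model's forgetting auditable. If the agent later seems "attached to an old plan", there is a record of exactly what it could and could not see.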

This is where taste and reliability begin to look like the same thing. A messy context system produces messy behavior, but worse, it produces behavior that is hard to blame on anything. The agent seems distracted. Or overconfident. Or weirdly attached to an old plan. Those are psychological explanations for a bookkeeping problem.

What survived

The taste that survived all three generations is a suspicion of magical autonomy.

I like agents that act. I like long-running work, delegated investigation, self-upgrade loops, browser validation, visible tools, and systems that can keep going while I make coffee. But the more I build them, the more I trust the boring machinery around the action: permissions, budgets, durable event logs, typed capabilities, replay, recovery, explicit ownership, and tests that make product promises falsifiable.

The journey from the first case study to the third is not really a journey from local to cloud, or from one framework to another. It is a journey from “the agent said it did the work” to “the system can show what happened, recover from interruption, explain who owned each action, and prove the output still exists.”

That is less glamorous than the usual story about agents. Good. Glamour is often where missing state goes to hide.