Field entry, 7 May.

I checked out chrischabot/code-search expecting to find a small Rust search tool, and that is mostly what it is. The more interesting detail is that it is small in the old-fashioned way: a handful of files doing one job directly, without first constructing a cathedral around the possibility of doing the job later.

The tool has three modes. Plain search is for the known thing: an identifier, a string, a route, the boring little symbol that turns out to be the hinge of the afternoon. Regex is for structural suspicion, when the shape matters more than the exact text. Semantic search is the provocative one, because in this case “semantic” does not mean embeddings, vectors, model calls, or a misty little cloud of meaning somewhere on the network. It means BM25.

That distinction matters. BM25 is not semantic in the modern, pitch-deck sense. It does not know that “login” and “sign in” are related unless the codebase gives it enough shared vocabulary to make that relationship visible. What it does know, very efficiently, is that a file containing several rare query terms is probably more relevant than a file containing one common term, and that term frequency should help until it starts shouting. For code, which is full of repeated names, comments, file paths, imports, tests, and error strings, that turns out to be a surprisingly useful kind of almost-semantics.
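For readers who want the arithmetic behind "help until it starts shouting", this is the textbook Okapi BM25 scoring function. Here $f(q_i, D)$ is how often query term $q_i$ appears in document $D$, $|D|$ is the document's length in tokens, $\mathrm{avgdl}$ is the average length across the index, $N$ is the number of documents, and $n(q_i)$ is how many of them contain $q_i$. The source does not say which IDF variant or which $k_1$ and $b$ code-search uses. The Lucene-style IDF below and the common defaults $k_1 = 1.2$, $b = 0.75$ are assumptions.

```latex
\mathrm{score}(D, Q) = \sum_{q_i \in Q} \mathrm{IDF}(q_i)\,
  \frac{f(q_i, D)\,(k_1 + 1)}{f(q_i, D) + k_1\left(1 - b + b\,\dfrac{|D|}{\mathrm{avgdl}}\right)}

\mathrm{IDF}(q_i) = \ln\!\left(\frac{N - n(q_i) + 0.5}{n(q_i) + 0.5} + 1\right)
```

The saturation lives in the fraction: as $f(q_i, D)$ grows, the fraction approaches $k_1 + 1$, so the tenth mention of a term adds far less than the second. The $b$ term stretches that curve according to how long the document is relative to the average, and the IDF factor is largest when $n(q_i)$ is small, which is the "several rare query terms" effect.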

There is a lesson hiding there for coding agents. Agents often begin a task without knowing the names the codebase uses for its own ideas. A human can smell their way through a repository with half a dozen searches and a lot of peripheral memory. An agent has tools, context, and a budget. If the first search is too literal, it misses the implementation because it guessed the wrong word; if the first search is too grand, it spends money and time building a private weather system over what is, at root, a file lookup problem.

code-search sits in the useful middle. It keeps exact search cheap enough to use constantly, then adds a ranked discovery pass for the moments when the agent knows the concept but not the local vocabulary.

The fast path

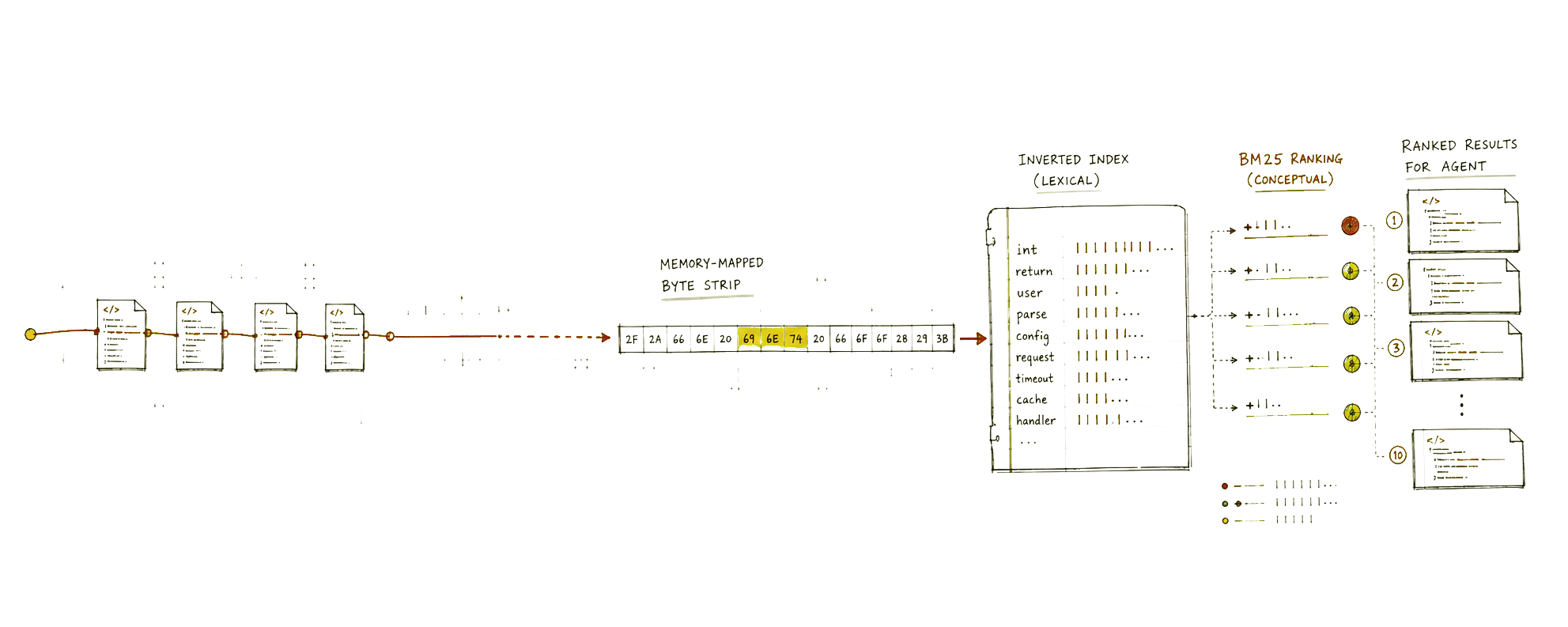

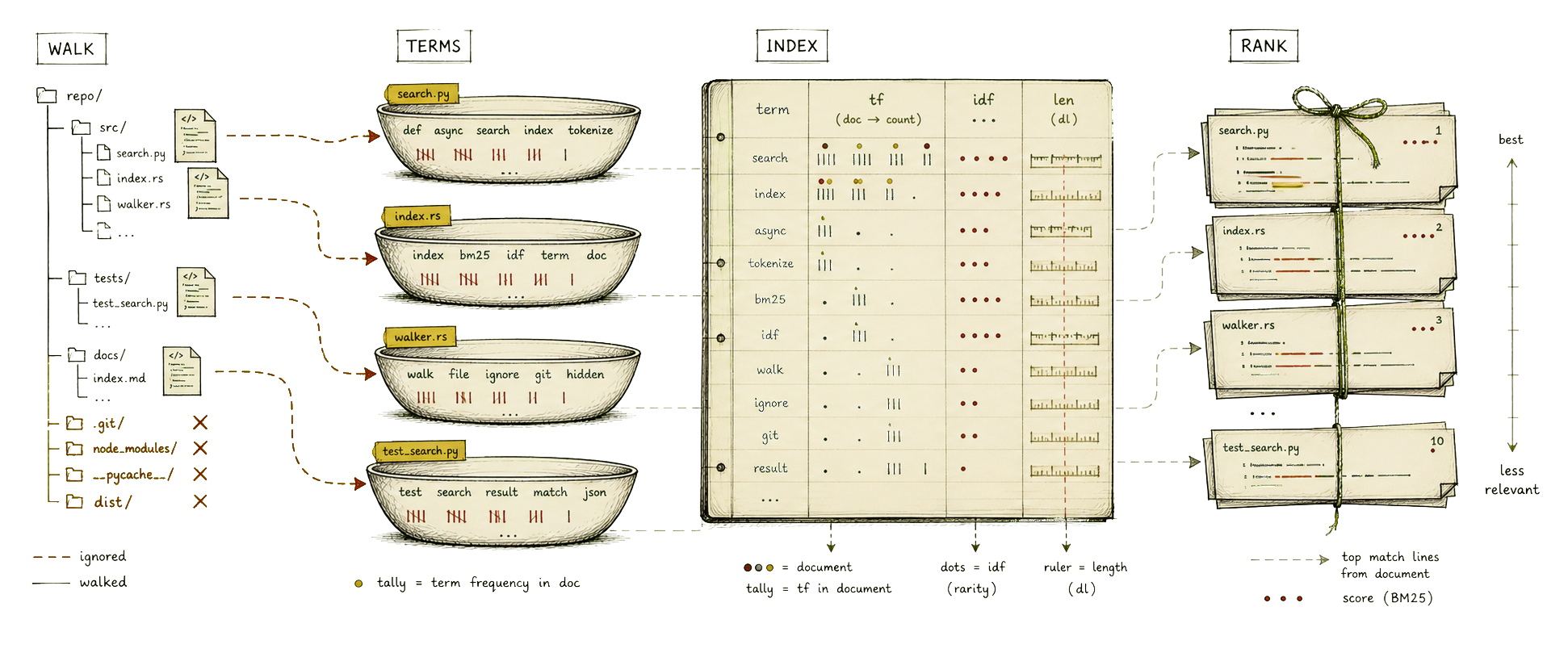

The plain-search path is where the ripgrep comparison becomes fair. It walks the tree with the ignore crate, so .gitignore, global git ignores, hidden files, language filters, and explicit extension filters are part of the traversal rather than bolted on afterwards. Each file is opened and memory-mapped with memmap2, then searched as bytes with memchr’s memmem::Finder.
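To make "part of the traversal rather than bolted on afterwards" concrete, here is a rough std-only approximation that runs without crates. Filters are applied while walking, so a hidden directory is never even read. This is a sketch, not the ignore crate's behavior: `walk` and its parameters are hypothetical, and it does not read .gitignore or global git ignore files, which is exactly where the real crate earns its keep.

```rust
use std::fs;
use std::io;
use std::path::{Path, PathBuf};

/// Hypothetical std-only walker: collects files under `root`, pruning hidden
/// entries (unless `include_hidden`) and keeping only the given extensions
/// (all files when `extensions` is empty). Unlike the `ignore` crate, it does
/// NOT read .gitignore or global git ignore files.
fn walk(root: &Path, include_hidden: bool, extensions: &[&str]) -> io::Result<Vec<PathBuf>> {
    let mut files = Vec::new();
    let mut pending = vec![root.to_path_buf()];
    while let Some(dir) = pending.pop() {
        for entry in fs::read_dir(&dir)? {
            let entry = entry?;
            let hidden = entry.file_name().to_string_lossy().starts_with('.');
            // Filter during traversal: a skipped hidden directory is never read.
            if hidden && !include_hidden {
                continue;
            }
            let path = entry.path();
            // file_type() does not follow symlinks, so symlinks are skipped here.
            let file_type = entry.file_type()?;
            if file_type.is_dir() {
                pending.push(path);
            } else if file_type.is_file() {
                let wanted = extensions.is_empty()
                    || path
                        .extension()
                        .and_then(|ext| ext.to_str())
                        .map_or(false, |ext| extensions.contains(&ext));
                if wanted {
                    files.push(path);
                }
            }
        }
    }
    // Sort for deterministic output.
    files.sort();
    Ok(files)
}
```

In the tool as described, each surviving path would then be opened, memory-mapped with memmap2, and handed to the finder; this sketch stops at the list of candidate paths.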

That is a good sign. If you want to stand near ripgrep, you do not begin by turning every file into a tasteful sequence of Unicode feelings. You search bytes, skip huge files, run the work in parallel with Rayon, and avoid building expensive line tables until you have already proved there is a match. code-search does exactly that: a quick finder pass first, line starts only after a match, context extraction only when requested, and special faster paths for --files-only and --count.
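The ordering described here, finder pass first and line bookkeeping only after a hit, can be sketched without any crates. The naive `find` is an assumed stand-in for memchr's `memmem::Finder`, which is far faster; all function names are hypothetical, and Rayon parallelism and context extraction are left out.

```rust
/// Naive stand-in for memchr's memmem::Finder (assumption: the real tool uses
/// memchr's much faster vectorized search). First match at or after `from`.
fn find(haystack: &[u8], needle: &[u8], from: usize) -> Option<usize> {
    if needle.is_empty() || from > haystack.len() {
        return None;
    }
    haystack[from..]
        .windows(needle.len())
        .position(|window| window == needle)
        .map(|offset| from + offset)
}

/// Index of the '\n' that ends the line containing `pos`, or the buffer length at EOF.
fn line_end(haystack: &[u8], pos: usize) -> usize {
    haystack[pos..]
        .iter()
        .position(|&b| b == b'\n')
        .map_or(haystack.len(), |offset| pos + offset)
}

/// --files-only style: a single finder pass, no line bookkeeping at all.
fn file_matches(haystack: &[u8], needle: &[u8]) -> bool {
    find(haystack, needle, 0).is_some()
}

/// --count style: count matching lines without building a line table.
fn count_matching_lines(haystack: &[u8], needle: &[u8]) -> usize {
    let mut count = 0;
    let mut next = find(haystack, needle, 0);
    while let Some(pos) = next {
        count += 1;
        // Skip the rest of this line so a line with two hits counts once.
        next = find(haystack, needle, line_end(haystack, pos) + 1);
    }
    count
}

/// Full search: (1-based line number, line bytes) for each matching line.
fn search<'a>(haystack: &'a [u8], needle: &[u8]) -> Vec<(usize, &'a [u8])> {
    // Cheap first pass: most files do not match, so bail out before any line work.
    let Some(first) = find(haystack, needle, 0) else {
        return Vec::new();
    };
    // Only a file with at least one hit pays for the line-start table.
    let mut line_starts = vec![0];
    for (i, &byte) in haystack.iter().enumerate() {
        if byte == b'\n' {
            line_starts.push(i + 1);
        }
    }
    let mut results = Vec::new();
    let mut next = Some(first);
    while let Some(pos) = next {
        // The containing line is the last line start at or before `pos`.
        let line_idx = match line_starts.binary_search(&pos) {
            Ok(i) => i,
            Err(i) => i - 1,
        };
        let end = line_end(haystack, pos);
        results.push((line_idx + 1, &haystack[line_starts[line_idx]..end]));
        next = find(haystack, needle, end + 1);
    }
    results
}
```

The design point is the early return in `search`: a file with no match costs one scan and nothing else, which is where most of the time goes in a large tree.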

The local sanity check was deliberately unceremonious. Against a real workspace, with hidden and ignored files included so node_modules was part of the walk, I ran eight warm searches for useState. rg averaged about 254 ms and code-search about 262 ms, roughly 3 percent slower. That is not a paper, a benchmark suite, or a reason to start carving numbers into public stone, but it does explain why the tool feels plausible: the exact-search path is close because it is built out of the same class of decisions, not because the README says the word fast in a confident tone.

The README’s larger benchmark table is a little more mixed, with code-search ranging from roughly two-thirds of ripgrep speed to just under ninety percent on the listed repositories. That is fine. The interesting engineering target is not “beat ripgrep at being ripgrep”, which is how many tools wander into a wall. The target is to get close enough on the common exact-search path that users and agents do not hesitate to call it, while offering another mode that ripgrep does not try to be.

The ranked path

The BM25 index lives in .code-search/bm25_index.json. Initializing it walks the repository, reads source files into strings, tokenizes them, stores term frequencies per path, records modification times, calculates inverse document frequency, and keeps the average document length. Subsequent indexing is incremental in the simple, practical sense: if the file modification time has not changed, the document can stay in the index; if a file has disappeared, its entry is removed.
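An in-memory sketch of that bookkeeping, assuming a few things the source does not spell out: the struct layout, the tokenizer (lowercase, split on anything that is not alphanumeric or an underscore), and a plain integer `mtime`. The real index is persisted as JSON in .code-search/bm25_index.json; this std-only version skips serialization.

```rust
use std::collections::{HashMap, HashSet};

/// Hypothetical per-file entry: term frequencies, token count, and the
/// modification time seen when the file was last tokenized.
struct Doc {
    term_freqs: HashMap<String, u32>,
    length: usize,
    mtime: u64,
}

/// Hypothetical in-memory index; the real one is persisted as JSON.
#[derive(Default)]
struct Index {
    docs: HashMap<String, Doc>,
    idf: HashMap<String, f64>,
    avg_doc_len: f64,
}

/// Assumed tokenizer: lowercase, split on anything not alphanumeric or '_'.
fn tokenize(text: &str) -> Vec<String> {
    text.split(|c: char| !c.is_alphanumeric() && c != '_')
        .filter(|token| !token.is_empty())
        .map(str::to_lowercase)
        .collect()
}

impl Index {
    /// Brings the index in line with `files`, a snapshot of (path, mtime, contents).
    /// Returns how many files had to be (re)tokenized.
    fn update(&mut self, files: &[(&str, u64, &str)]) -> usize {
        // Files that have disappeared lose their entries.
        let present: HashSet<&str> = files.iter().map(|&(path, _, _)| path).collect();
        self.docs.retain(|path, _| present.contains(path.as_str()));

        let mut reindexed = 0;
        for &(path, mtime, contents) in files {
            // Unchanged modification time: keep the existing entry as-is.
            if self.docs.get(path).map_or(false, |doc| doc.mtime == mtime) {
                continue;
            }
            let tokens = tokenize(contents);
            let mut term_freqs: HashMap<String, u32> = HashMap::new();
            for token in &tokens {
                *term_freqs.entry(token.clone()).or_insert(0) += 1;
            }
            self.docs.insert(path.to_string(), Doc { term_freqs, length: tokens.len(), mtime });
            reindexed += 1;
        }
        self.recompute_stats();
        reindexed
    }

    /// IDF and average length depend on the whole corpus, so refresh them after any change.
    fn recompute_stats(&mut self) {
        let n = self.docs.len() as f64;
        let mut doc_freq: HashMap<&str, usize> = HashMap::new();
        let mut total_len = 0;
        for doc in self.docs.values() {
            total_len += doc.length;
            for term in doc.term_freqs.keys() {
                *doc_freq.entry(term.as_str()).or_insert(0) += 1;
            }
        }
        self.avg_doc_len = if n > 0.0 { total_len as f64 / n } else { 0.0 };
        self.idf = doc_freq
            .into_iter()
            .map(|(term, df)| {
                let df = df as f64;
                (term.to_string(), ((n - df + 0.5) / (df + 0.5) + 1.0).ln())
            })
            .collect();
    }
}
```

Recomputing IDF and the average length on every update is the simple choice: both depend on the whole corpus, so any added, changed, or removed file can shift them, while the expensive part (tokenizing unchanged files) is still skipped.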

The scoring pass is straightforward BM25. Query tokens are compared with each document’s term-frequency map. Rare terms get more weight through IDF. Repeated terms help, but saturate. Long documents are normalized so a file does not win merely by being large and vaguely acquisitive, which is the search equivalent of awarding a meeting to the person who booked the largest room.
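The scoring pass as a standalone function, following the textbook Okapi BM25 formula. `K1`, `B`, and the Lucene-style IDF are assumed common defaults, not values taken from code-search, and `bm25_score` is a hypothetical name.

```rust
use std::collections::HashMap;

/// Common BM25 defaults (assumption: code-search's own values are not stated).
const K1: f64 = 1.2;
const B: f64 = 0.75;

/// Lucene-style IDF, which stays positive even for very common terms.
fn idf(total_docs: usize, docs_with_term: usize) -> f64 {
    let (n, df) = (total_docs as f64, docs_with_term as f64);
    ((n - df + 0.5) / (df + 0.5) + 1.0).ln()
}

/// BM25 score of one document for an already-tokenized query.
fn bm25_score(
    query: &[&str],
    term_freqs: &HashMap<&str, u32>,
    doc_len: f64,
    avg_doc_len: f64,
    idf_table: &HashMap<&str, f64>,
) -> f64 {
    // Length normalization: documents longer than average need more hits to score the same.
    let relative_len = if avg_doc_len > 0.0 { doc_len / avg_doc_len } else { 1.0 };
    let norm = K1 * (1.0 - B + B * relative_len);
    query
        .iter()
        .map(|term| {
            let tf = f64::from(*term_freqs.get(term).unwrap_or(&0));
            let weight = *idf_table.get(term).unwrap_or(&0.0);
            // Saturating term-frequency factor: tends to K1 + 1 as tf grows.
            weight * tf * (K1 + 1.0) / (tf + norm)
        })
        .sum()
}
```

With 100 documents, a term found in 2 of them gets an IDF of about 3.7, while one found in 60 gets about 0.5, so a single rare hit outweighs a single common one by roughly a factor of seven before length normalization.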

After documents are scored in parallel and sorted, code-search opens the winning files again and finds the best matching lines. That last step is modest: it tokenizes each line, counts direct query-token hits, and returns up to five lines per document, with optional context. It is not doing AST-aware slicing, symbol expansion, or identifier splitting. A camel-cased name can still be more opaque than one would like. But the output is already useful because it gives an agent a ranked shortlist of files and snippets, and it can emit that shortlist as JSON.
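The snippet step, sketched: tokenize each line, count direct query-token hits, keep the top few. The tokenizer and the tie-breaking rule (more hits first, then file order) are assumptions, `best_lines` is a hypothetical name, and context lines are omitted.

```rust
/// Assumed tokenizer: lowercase, split on anything not alphanumeric or '_'.
fn tokenize(text: &str) -> Vec<String> {
    text.split(|c: char| !c.is_alphanumeric() && c != '_')
        .filter(|token| !token.is_empty())
        .map(str::to_lowercase)
        .collect()
}

/// Hypothetical snippet picker: up to `max_lines` lines as
/// (1-based line number, query-token hits, line text), most hits first.
fn best_lines<'a>(contents: &'a str, query_tokens: &[String], max_lines: usize) -> Vec<(usize, usize, &'a str)> {
    let mut scored: Vec<(usize, usize, &'a str)> = contents
        .lines()
        .enumerate()
        .filter_map(|(i, line)| {
            // Direct hits only: no stemming, no identifier splitting, no proximity.
            let hits = tokenize(line)
                .iter()
                .filter(|token| query_tokens.contains(*token))
                .count();
            (hits > 0).then(|| (i + 1, hits, line))
        })
        .collect();
    // sort_by is stable, so lines with equal hits stay in file order.
    scored.sort_by(|a, b| b.1.cmp(&a.1));
    scored.truncate(max_lines);
    scored
}
```

Called with `max_lines` set to 5 this mirrors the five-lines-per-document limit. With the tokenizer assumed here, a line such as `fn retryWithBackoff() {}` yields the single token `retrywithbackoff`, so a query for `retry` gives it zero hits, which is the camelCase opacity in practice.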

That JSON option is not decorative. For a human, colored terminal output is pleasant enough. For an agent, structured results are the difference between “I saw some text go by” and “I can now inspect these five files in order, with line numbers, scores, and context.” Tool output becomes part of the agent’s working memory instead of another noisy transcript to squint at.
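What such a shortlist might look like on the wire, hand-rolled to stay std-only. The field names (`path`, `score`, `snippets`, `line`, `text`) are a hypothetical schema for illustration, not code-search's actual output format; in a real crate serde_json would handle the escaping.

```rust
use std::fmt::Write;

/// Hypothetical result shapes; field names are illustrative only.
struct Snippet {
    line: usize,
    text: String,
}

struct FileResult {
    path: String,
    score: f64,
    snippets: Vec<Snippet>,
}

/// Quote and escape a string as a JSON string literal.
fn json_string(s: &str) -> String {
    let mut out = String::with_capacity(s.len() + 2);
    out.push('"');
    for c in s.chars() {
        match c {
            '"' => out.push_str("\\\""),
            '\\' => out.push_str("\\\\"),
            '\n' => out.push_str("\\n"),
            '\r' => out.push_str("\\r"),
            '\t' => out.push_str("\\t"),
            // Remaining control characters must be \u-escaped in JSON.
            c if (c as u32) < 0x20 => {
                write!(out, "\\u{:04x}", c as u32).expect("writing to a String cannot fail");
            }
            c => out.push(c),
        }
    }
    out.push('"');
    out
}

/// Serialize ranked results as a JSON array, best file first (scores assumed finite).
fn to_json(results: &[FileResult]) -> String {
    let files: Vec<String> = results
        .iter()
        .map(|r| {
            let snippets: Vec<String> = r
                .snippets
                .iter()
                .map(|s| format!("{{\"line\":{},\"text\":{}}}", s.line, json_string(&s.text)))
                .collect();
            format!(
                "{{\"path\":{},\"score\":{},\"snippets\":[{}]}}",
                json_string(&r.path),
                r.score,
                snippets.join(",")
            )
        })
        .collect();
    format!("[{}]", files.join(","))
}
```

The useful property for an agent is that order, line numbers, and scores survive as data rather than as terminal colors, so "open these five files in order" becomes a loop instead of a reading exercise.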

Why agents need both

The agent search problem has two phases that are easy to confuse.

The first phase is location. Where is the thing? This is where exact search and regex are still undefeated in their little republic. If the task mentions SearchArgs, useState, /api/turns/start, or BM25Index, the fastest respectable move is to search for the string and open the file. No embedding model should be summoned for work a byte scanner can do before the kettle has admitted it is awake.

The second phase is orientation. What is this codebase’s name for the thing I am thinking about? Where does authentication become session state? Where do retries become backoff policy? Where does a “project” become a workspace, a tree, a run, or a job? That is where exact search becomes brittle, because the agent’s first query is often a translation from the user’s language into a codebase it has not yet learned.

BM25 helps precisely because it is cheap, local, and literal enough to be inspectable. An agent can ask for “error exception handling logging retry” and get files where those ideas cluster. It can ask for “main entry point initialization” and receive a ranked set of likely starting points. It can then fall back to exact search once it has learned the repository’s nouns. The point is not to replace careful reading. The point is to make the first five things worth reading more likely to be the right five things.

This also keeps the trust boundary pleasantly boring. There is no remote embedding store, no model-side memory of the repository, no background index whose contents are hard to explain. The BM25 file is just a JSON index under the project root. It can be deleted, rebuilt, inspected, ignored, or kept out of commits. For agent tooling, that kind of dullness is a feature.

The useful compromise

The obvious criticism is that this is not really semantic search. That criticism is correct, and also slightly misses the point.

For coding agents, the useful question is not whether the tool has achieved philosophical meaning. The useful question is whether it improves the agent’s first move without making the second move worse. code-search does that by preserving the fast exact-search habits that make ripgrep indispensable, then adding a BM25 ranking layer for conceptual discovery when the exact words are not known yet.

There are clear next steps. Identifier-aware tokenization would help with camelCase, snake_case, and namespaced symbols. Better snippet selection could reward proximity, definitions, exports, tests, and call sites rather than simple line-token overlap. The index could store enough lightweight structure to distinguish comments from code and declarations from incidental mentions. None of that changes the shape of the tool. It just makes the ranked shortlist less naive.
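A sketch of the first of those next steps, identifier-aware splitting. This is hypothetical, not something code-search is described as doing today; `split_identifier` and its boundary rules are assumptions.

```rust
/// Hypothetical identifier-aware splitter: breaks snake_case, kebab-case, and
/// namespaced paths on non-alphanumeric characters, then splits camelCase and
/// acronym boundaries, lowercasing every part.
fn split_identifier(ident: &str) -> Vec<String> {
    let mut parts = Vec::new();
    for chunk in ident.split(|c: char| !c.is_alphanumeric()) {
        let chars: Vec<char> = chunk.chars().collect();
        let mut start = 0;
        for i in 1..chars.len() {
            let (prev, cur) = (chars[i - 1], chars[i]);
            let next_is_lower = chars.get(i + 1).map_or(false, |c| c.is_lowercase());
            // "retryBackoff" / "v2Server": lowercase or digit followed by uppercase.
            let camel = (prev.is_lowercase() || prev.is_ascii_digit()) && cur.is_uppercase();
            // "HTTPServer": the last capital of an acronym starts the next word.
            let acronym_end = prev.is_uppercase() && cur.is_uppercase() && next_is_lower;
            if camel || acronym_end {
                parts.push(chars[start..i].iter().collect::<String>().to_lowercase());
                start = i;
            }
        }
        // Empty chunks (from "::" or "__") contribute nothing.
        if start < chars.len() {
            parts.push(chars[start..].iter().collect::<String>().to_lowercase());
        }
    }
    parts
}
```

A tokenizer built on this might also keep the whole lowercased identifier alongside its parts, so a query for `retryWithBackoff` still lands exactly while `retry` and `backoff` now find it too.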

That is the part I like about it. The project does not ask agents to stop using search as a sharp instrument. It gives them a second instrument for the moment before the sharp one has a target.

The trick, in other words, is not to build an oracle. It is to make the cheap thing good enough that an agent can afford to be curious.