Field entry, 7 May.

The small worker looked, at first, almost too plain to be interesting.

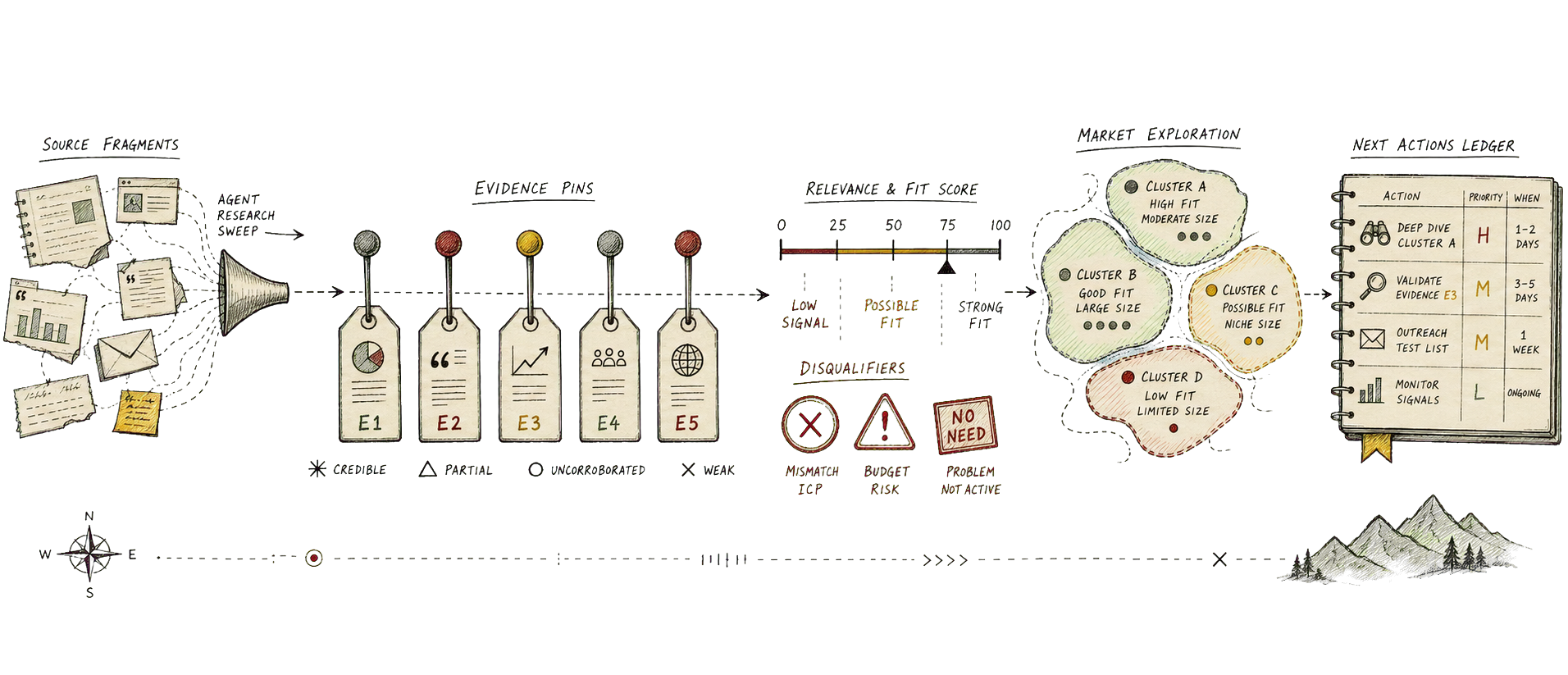

One endpoint accepted a target market, a product category, and a location. The response came back as JSON: a query echo, a generated timestamp, scoring weights, hard disqualifiers, and a ranked set of prospects. Each prospect carried the same specimen labels: name, cluster, tier, score, score breakdown, pain hypothesis, evidence, disqualifiers, outreach hook, and next action.
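
For readers who think better in types, the response described above can be sketched as a pair of dataclasses. This is only a guess at the shape: the snake_case field names are my assumption from the labels listed, and every value is a placeholder rather than real output.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class Prospect:
    name: str
    cluster: str
    tier: str                        # "A", "B", "monitor", or "disqualify"
    score: int
    score_breakdown: dict[str, int]  # dimension key -> points awarded
    pain_hypothesis: str
    evidence: list[str]
    disqualifiers: list[str]
    outreach_hook: str
    next_action: str


@dataclass
class LeadResponse:
    query: dict[str, str]            # echo of the request
    generated_at: str                # timestamp, assumed ISO 8601
    scoring_weights: dict[str, int]
    hard_disqualifiers: list[str]
    prospects: list[Prospect] = field(default_factory=list)


# Placeholder values only; nothing here is real data.
response = LeadResponse(
    query={
        "target_market": "example market",
        "product_category": "example category",
        "location": "example city",
    },
    generated_at="2000-01-01T00:00:00Z",
    scoring_weights={"engineering_intensity": 20},
    hard_disqualifiers=["no_plausible_buyer"],
    prospects=[
        Prospect(
            name="Example Co",
            cluster="example cluster",
            tier="monitor",
            score=14,
            score_breakdown={"engineering_intensity": 14},
            pain_hypothesis="placeholder hypothesis",
            evidence=["placeholder evidence note"],
            disqualifiers=[],
            outreach_hook="placeholder hook",
            next_action="placeholder next step",
        )
    ],
)

payload = json.dumps(asdict(response), indent=2)
print(payload)
```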

That is not a large application. It is barely furniture. But the interesting thing about it was the shape of the furniture, because the endpoint did not return a list of names with a thin mist of confidence sprayed over the top. It returned a small argument for each lead.

That distinction is the whole product.

Most lead generation systems begin by asking the wrong question. They ask, “who should we sell to?” and then immediately reward volume, because volume is the easiest thing to produce and the most flattering thing to screenshot. An agent can make this worse with impressive speed. Give it a market and it will assemble a list before the kettle has had time to develop an opinion, complete with plausible reasons, invented urgency, and that particular confident texture that says the sentence has been formatted more carefully than it has been earned.

The better question is more annoying and more useful: what would have to be true for this organisation to be a good lead, and what evidence do we have that it is true?

Start with a fit model

Before the agent searches, give it a scoring model. Not a mood. Not “high intent” as a decorative label. A model.

The version I inspected used six dimensions: local market presence, engineering intensity, adoption of the relevant technical category, regulated or security-sensitive need, scale urgency, and buyer accessibility. The exact weights are less important than the habit they enforce. A lead is not good because it sounds familiar. It is good because several independent signals point in the same direction and no hard disqualifier has already ended the conversation.

This is where agents are useful in the unglamorous way that matters. Ask one agent to propose the fit model from the product category and market. Ask another to attack it. Ask a third to translate the model into observable signals: hiring pages, technical writing, public architecture notes, compliance obligations, partner ecosystems, integration surfaces, funding or expansion signals, and the boring little breadcrumbs that often carry more truth than a brand sentence about innovation.

The result should be a rubric with maximum scores, evidence requirements, and disqualifiers. The disqualifiers matter more than they first appear. No relevant local presence. No product or engineering surface. No plausible buyer. Procurement path too slow for the motion. Technical constraints that make the product unusable. These are not pessimistic details. They are kindnesses. They stop the agent from polishing a lead that should have been allowed to leave quietly.
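
A rubric like this is small enough to write down as data. The sketch below uses the six dimensions named earlier and the disqualifiers just listed; the maximum scores (summing to 100), the key names, and the evidence wording are illustrative assumptions, not the weights from the worker.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Dimension:
    key: str
    max_score: int
    evidence_required: str


# Maximum scores are invented for illustration; only the dimension
# names come from the rubric described in the text.
RUBRIC = (
    Dimension("local_market_presence", 20, "office, entity, or customers in the target location"),
    Dimension("engineering_intensity", 20, "hiring pages or technical writing"),
    Dimension("category_adoption", 20, "public architecture notes or integration guides"),
    Dimension("regulated_or_security_need", 15, "stated compliance obligations or a security page"),
    Dimension("scale_urgency", 15, "funding or expansion signals"),
    Dimension("buyer_accessibility", 10, "a role that plausibly owns the budget"),
)

HARD_DISQUALIFIERS = (
    "no_relevant_local_presence",
    "no_product_or_engineering_surface",
    "no_plausible_buyer",
    "procurement_too_slow_for_motion",
    "technical_constraints_block_use",
)


def check_rubric(rubric, total=100):
    """Raise ValueError if the rubric is malformed."""
    keys = [d.key for d in rubric]
    if len(keys) != len(set(keys)):
        raise ValueError("duplicate dimension key")
    for d in rubric:
        if d.max_score <= 0:
            raise ValueError(f"{d.key}: max_score must be positive")
        if not d.evidence_required.strip():
            raise ValueError(f"{d.key}: evidence requirement is empty")
    if sum(d.max_score for d in rubric) != total:
        raise ValueError(f"max scores must sum to {total}")


check_rubric(RUBRIC)
```

Keeping the evidence requirement next to the maximum score is the useful part: a dimension that cannot say what would count as evidence is a mood with a number attached.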

Search for evidence, not names

The agent should not start by building the final list. It should start by collecting candidate evidence.

A good search pass looks for clusters before it looks for winners. In a new market, the first useful output is not “top ten prospects”; it is a map of where fit might live. Regulated product teams may form one cluster. Infrastructure-heavy software teams may form another. Applied automation teams may form a third. Each cluster gets its own hypothesis about pain, urgency, and buying route.

Once the clusters exist, the agent can collect candidates inside them. The instruction is deliberately fussy: for each candidate, preserve the source trail, separate primary evidence from weaker third-party evidence, quote or summarize only what supports a specific scoring dimension, and mark uncertainty instead of sanding it down. A careers page may support engineering intensity. A security page may support regulated need. A public integration guide may support technical surface area. A vague article about transformation supports, at most, the ancient human capacity for writing a vague article.
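
One way to make that fussiness hard to skip is to give each piece of evidence a record that cannot exist without a source and a scoring dimension. A minimal sketch follows; the class, field names, and example.com URLs are all hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass
from enum import Enum

# Dimension keys are assumed names for the six rubric dimensions.
DIMENSIONS = {
    "local_market_presence", "engineering_intensity", "category_adoption",
    "regulated_or_security_need", "scale_urgency", "buyer_accessibility",
}


class SourceKind(Enum):
    PRIMARY = "primary"          # the organisation's own pages
    THIRD_PARTY = "third_party"  # weaker, second-hand sources


@dataclass(frozen=True)
class EvidenceNote:
    candidate: str
    dimension: str
    source_url: str
    source_kind: SourceKind
    summary: str
    uncertain: bool = False      # mark doubt instead of sanding it down

    def __post_init__(self):
        # Evidence that supports no specific dimension is not evidence.
        if self.dimension not in DIMENSIONS:
            raise ValueError(f"unknown dimension: {self.dimension!r}")
        if not self.source_url.strip():
            raise ValueError("a note without a source trail is rejected")


def by_dimension(notes):
    """Group notes per dimension, primary sources first."""
    grouped = defaultdict(list)
    for note in notes:
        grouped[note.dimension].append(note)
    for dim_notes in grouped.values():
        dim_notes.sort(key=lambda n: n.source_kind is not SourceKind.PRIMARY)
    return dict(grouped)


notes = [
    EvidenceNote("Example Co", "engineering_intensity", "https://example.com/blog-roundup",
                 SourceKind.THIRD_PARTY, "roundup mentions a platform team", uncertain=True),
    EvidenceNote("Example Co", "engineering_intensity", "https://example.com/careers",
                 SourceKind.PRIMARY, "careers page lists platform roles"),
]
grouped = by_dimension(notes)
```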

The agent should also keep failed searches. That sounds like bookkeeping, but it is useful market intelligence. If a cluster produces many plausible candidates with weak evidence, the market may be noisy but shallow. If it produces few candidates with strong evidence, the market may be narrow but worth deliberate pursuit. If it produces evidence for need but no accessible buyer, the sales motion may be the problem rather than the market.
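
The three readings above can be written as a small diagnostic over search counts. This is a rough sketch: the function name, the threshold of ten candidates, and the 50% cut-off for "strong" are assumptions chosen only to make the logic concrete.

```python
def diagnose_cluster(candidates, strong_evidence, reachable_buyers, many=10):
    """Classify a cluster from simple counts kept during the search pass.

    candidates       -- plausible candidates found in the cluster
    strong_evidence  -- how many of them have strong evidence of need
    reachable_buyers -- how many have an accessible buyer
    """
    if candidates == 0:
        return "empty: keep the failed search on record"
    strong_share = strong_evidence / candidates
    if strong_evidence > 0 and reachable_buyers == 0:
        return "need without access: the sales motion may be the problem"
    if candidates >= many and strong_share < 0.5:
        return "noisy but shallow"
    if candidates < many and strong_share >= 0.5:
        return "narrow but worth deliberate pursuit"
    return "inconclusive: collect more evidence"
```

For instance, `diagnose_cluster(20, 3, 2)` falls into the noisy-but-shallow branch, while `diagnose_cluster(6, 4, 0)` points at the sales motion rather than the market.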

Score the lead honestly

Scoring is where the agent must become less charming.

For each candidate, ask it to fill the rubric dimension by dimension. It should show the score, the maximum, the evidence used, and the reason the evidence was enough or not enough. If a dimension has no evidence, it gets a low score. If the evidence is indirect, it gets a cautious score. If a hard disqualifier appears, the tier drops to disqualify immediately, even if the rest of the row looks attractive in a spreadsheet.
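
A simple way to enforce "no evidence, low score" is to cap each dimension by the strength of its evidence, whatever number the agent proposes. The sketch below assumes three strength labels and cap percentages (10%, 50%, 100%) that I made up; only the principle comes from the text.

```python
from dataclasses import dataclass

# Share of the maximum a dimension may earn, by evidence strength.
# The labels and percentages are illustrative assumptions.
CAP_PERCENT = {"none": 10, "indirect": 50, "direct": 100}


@dataclass
class DimensionScore:
    dimension: str
    score: int
    max_score: int
    evidence: list[str]
    reason: str


def score_dimension(dimension, max_score, strength, evidence, proposed):
    """Clamp the agent's proposed score to what the evidence can carry."""
    cap = max_score * CAP_PERCENT[strength] // 100
    score = max(0, min(proposed, cap))
    if score < proposed:
        reason = f"capped at {cap}/{max_score}: evidence is {strength}"
    else:
        reason = f"evidence is {strength}; proposed score stands"
    return DimensionScore(dimension, score, max_score, list(evidence), reason)


row = score_dimension(
    "engineering_intensity", 20, "indirect",
    ["third-party roundup mentions a platform team"], proposed=18,
)
print(row.score, row.reason)
```

The cap makes the charm expensive: an agent can still argue for 18 out of 20, but it has to bring direct evidence to collect it.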

This is the practical difference between ranking and decoration. A decorated list says, “this is a strong fit.” A scored list says, “this scored 82 because local presence, engineering intensity, and category adoption were strong; buyer accessibility is weak; the next action is to verify whether a platform or productivity leader owns a budget.” The second version is less smooth, which is precisely why it can be acted on.

The tier should be boring. A, B, monitor, disqualify. No mystical heat scores. No seven-stage funnel taxonomy with names that sound like a subscription analytics vendor trapped in a lift. The purpose is not to admire the classification system. The purpose is to decide what to do next.
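
Boring is easy to implement. A sketch of the tier decision, with the disqualifier checked before the score is even consulted; the thresholds of 75 and 55 are assumptions on a 100-point scale, not values from the worker.

```python
def assign_tier(score, disqualifiers, a_min=75, b_min=55):
    """Map a total score to A, B, monitor, or disqualify.

    A hard disqualifier wins over any score, however attractive.
    """
    if disqualifiers:
        return "disqualify"
    if score >= a_min:
        return "A"
    if score >= b_min:
        return "B"
    return "monitor"
```

Under these assumed thresholds, `assign_tier(82, [])` gives `"A"`, and `assign_tier(95, ["no_plausible_buyer"])` still gives `"disqualify"`.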

Turn ranking into market exploration

The important move happens after the score.

A ranked lead list is useful, but it is still only a list. The more interesting output is the market map underneath it. Once the agent has scored enough candidates, ask it to aggregate what it learned: which clusters produced the highest scores, which dimensions repeatedly failed, which pain hypotheses appeared often, which buyer paths looked reachable, and where the evidence was surprisingly thin.
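
Part of that aggregation is plain arithmetic over the scored rows. The sketch below groups prospects by cluster and reports the mean score and the dimension that most often falls short of its maximum. The record shape, the two-dimension rubric, and the sample rows are invented for illustration.

```python
from collections import defaultdict
from statistics import mean


def market_map(prospects, max_scores):
    """Summarise scored prospects per cluster.

    prospects  -- dicts with "cluster", "score", and "score_breakdown"
    max_scores -- dimension key -> maximum score in the rubric
    """
    by_cluster = defaultdict(list)
    for p in prospects:
        by_cluster[p["cluster"]].append(p)

    summary = {}
    for cluster, rows in sorted(by_cluster.items()):
        # Average share of the maximum earned on each dimension.
        fill = {
            dim: mean(r["score_breakdown"][dim] / cap for r in rows)
            for dim, cap in max_scores.items()
        }
        summary[cluster] = {
            "candidates": len(rows),
            "mean_score": mean(r["score"] for r in rows),
            "weakest_dimension": min(fill, key=fill.get),
        }
    return summary


# Invented sample rows with a cut-down, two-dimension rubric.
MAX_SCORES = {"engineering_intensity": 20, "buyer_accessibility": 10}
SAMPLE = [
    {"cluster": "regulated product teams", "score": 24,
     "score_breakdown": {"engineering_intensity": 18, "buyer_accessibility": 6}},
    {"cluster": "regulated product teams", "score": 20,
     "score_breakdown": {"engineering_intensity": 16, "buyer_accessibility": 4}},
    {"cluster": "infrastructure-heavy software teams", "score": 18,
     "score_breakdown": {"engineering_intensity": 10, "buyer_accessibility": 8}},
]
summary = market_map(SAMPLE, MAX_SCORES)
```

The pain hypotheses and buyer paths need a reader rather than a `mean`, but the weakest-dimension column alone is often enough to show where a cluster keeps failing.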

This turns lead research into exploration. You can ask whether the product category is strongest in regulated environments, developer tooling teams, integration-heavy platforms, or fast-growing automation groups. You can compare one city against another without pretending the same rubric means the same thing everywhere. You can discover that the obvious market has excellent need and terrible access, while the less glamorous one has enough pain, enough budget, and someone whose job title suggests they might actually open the email.

Agents are well suited to this because the work is repetitive, evidentiary, and slightly tedious. They can run the same rubric across many candidates without getting bored and quietly deciding that vibes are close enough. But they need a structure that makes boredom irrelevant. The source trail, scoring breakdown, hard disqualifiers, and next action are that structure.

The next action is especially important. A lead without a next action is not a lead. It is a museum label. The action can be a contact-mapping task, a validation question, a pilot hypothesis, a page to read, a procurement risk to check, or a reason to leave the candidate alone until the market changes. The point is that the agent should not merely say “good fit.” It should hand back the next piece of work.

Let agents divide the work

The clean pattern is a small team of agents with distinct jobs.

One agent designs the rubric from the product category and target market. One searches broadly and builds the candidate pool. One reads and extracts evidence into structured notes. One scores the candidates against the rubric. One reviews the scores for overclaiming, weak evidence, and disqualifiers that were politely ignored. A final agent writes the market brief: clusters, top leads, monitored leads, rejected leads, evidence gaps, and the recommended next exploration pass.

This sounds elaborate until you compare it with the usual alternative, which is one agent doing everything in a single warm bath of context. The problem with the warm bath is that early assumptions dissolve into later prose. A separate reviewer, given the rubric and the evidence but not the emotional journey, is much more likely to notice that “rapid growth” was inferred from a hiring page last updated during an earlier geological period.
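
The separation can be made mechanical: each stage declares which keys it may read, and the runner hands it nothing else. In the sketch below the six stage functions are stubs standing in for agent calls, and every name and value is hypothetical; the point is only that the reviewer sees the rubric, the evidence, and the scores, and none of the search history.

```python
def run_pipeline(stages, initial):
    """Run stages in order; each one sees only its declared inputs."""
    state = dict(initial)
    for name, inputs, stage in stages:
        visible = {key: state[key] for key in inputs}
        state.update(stage(visible))
    return state


# Stub stages: placeholders for real agent calls.
def design_rubric(ctx):
    return {"rubric": {"engineering_intensity": 20}}

def search(ctx):
    return {"candidates": ["Example Co"]}

def extract(ctx):
    return {"evidence": {c: ["careers page lists platform roles"] for c in ctx["candidates"]}}

def score(ctx):
    return {"scores": {c: 14 for c in ctx["evidence"]}}

def review(ctx):
    # Flag any positive score that has no supporting evidence.
    flags = [c for c, s in ctx["scores"].items() if s > 0 and not ctx["evidence"].get(c)]
    return {"flags": flags}

def write_brief(ctx):
    return {"brief": f"{len(ctx['scores'])} scored, {len(ctx['flags'])} flagged"}


STAGES = [
    ("rubric", ("product_category", "target_market"), design_rubric),
    ("search", ("rubric", "target_market"), search),
    ("extract", ("candidates",), extract),
    ("score", ("rubric", "evidence"), score),
    ("review", ("rubric", "evidence", "scores"), review),
    ("brief", ("scores", "flags"), write_brief),
]

result = run_pipeline(
    STAGES,
    {"product_category": "example category", "target_market": "example market"},
)
print(result["brief"])
```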

The implementation I inspected was intentionally deterministic. Same query shape, same scoring weights, same ordered output, same validation pass. That is a sensible place to start, because repeatability gives the human operator something to argue with. Once the workflow is stable, live search can feed it, the evidence store can grow, and the market map can become more current. But the first version should prove the loop before it performs the spectacle.

Field note

The lesson is not that agents can produce lead lists. They can, and so can a spreadsheet with enough caffeine nearby. The useful version is narrower and better: agents can turn lead generation into an inspectable research loop, where every prospect carries evidence, every score can be challenged, every disqualifier has teeth, and the ranked list becomes a way to understand the market rather than a pile of names waiting to disappoint someone.