It started, as these things often do, with something that should have been boring: one service needed to ask another service to do a thing.

The problem was not the request itself. The problem was that every service had developed a slightly different private religion about what a request was, where identity lived, whether a thread was continuity or just metadata, and how you could tell, three hops later, which innocent-looking call had eaten nine seconds and then died in a room nobody owned.

At a certain size, “just call the other service” stops being engineering advice and becomes a small act of vandalism.

The platform needed a unifying protocol, not because centralization is noble, but because a service network without a shared language becomes folklore. Each boundary grows its own error shape, auth story, event vocabulary, retry habit, and observability garnish. Then one day the user sees a spinner, the trace has three unrelated names for the same work, and everyone becomes a detective in a city with no street signs.

Naming the nouns

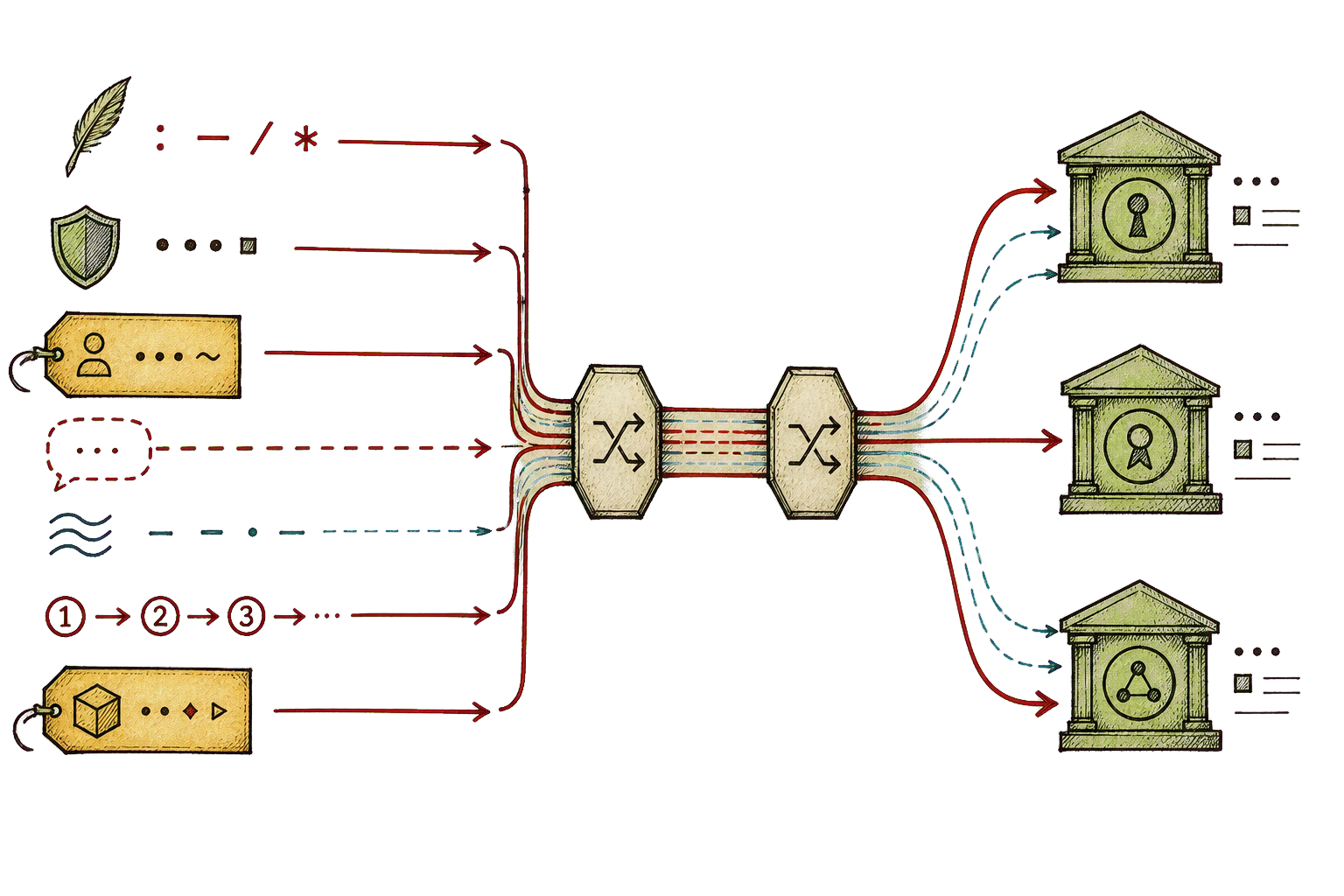

The useful move was to stop treating the platform as a diagram of boxes and start treating it as a language.

A thread is continuity. A turn is one execution-bearing exchange inside that continuity. A run is the work admitted behind the turn. Items, blocks, outputs, and deliveries are the public trail the work leaves behind. Resources are durable configuration and administrative state. Workspaces own files, blobs, checkpoints, and sync. Capabilities describe the actions a caller may discover and invoke. Streams carry state changes as ordered envelopes.

Once those nouns are stable, the method names become almost disappointingly plain:

conversation/thread.create

conversation/turn.start

runtime/run.start

workspace/checkpoint.create

sync/barrier.await

resource/get

capability/invoke

The dullness is doing work. It means the call can move over HTTP, WebSocket, server-sent events, stdio, or a later binding without changing what the sentence means. The transport may differ. The grammar survives.
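To make the grammar concrete, here is a minimal sketch of a parser for those method names, plus two wrappers that carry the same call over different bindings. Everything named here (`parseMethod`, `asJsonRpc`, `asHttpPost`) is hypothetical, the optional object segment is inferred from names like resource/get rather than taken from a spec, and the HTTP path layout is purely an assumption for illustration.

```typescript
// Hypothetical sketch: split a method name into its grammatical parts.
// Assumed grammar, inferred from the examples above: profile/object.action,
// where the object segment may be absent (resource/get, capability/invoke).
interface MethodName {
  profile: string;
  object?: string;
  action: string;
}

function parseMethod(method: string): MethodName {
  const slash = method.indexOf("/");
  if (slash <= 0 || slash === method.length - 1) {
    throw new Error(`expected profile/action, got "${method}"`);
  }
  const profile = method.slice(0, slash);
  const rest = method.slice(slash + 1);
  const dot = rest.indexOf(".");
  if (dot === -1) {
    return { profile, action: rest }; // e.g. resource/get
  }
  if (dot === 0 || dot === rest.length - 1) {
    throw new Error(`expected object.action, got "${rest}"`);
  }
  return { profile, object: rest.slice(0, dot), action: rest.slice(dot + 1) };
}

type Params = Record<string, unknown>;

// Two illustrative bindings. The wrapper changes; method and params do not.
function asJsonRpc(id: number, method: string, params: Params): string {
  return JSON.stringify({ jsonrpc: "2.0", id, method, params });
}

// Assumption: an HTTP binding that puts the method name in the path.
function asHttpPost(method: string, params: Params): { path: string; body: string } {
  return { path: `/${method}`, body: JSON.stringify(params) };
}
```

Both wrappers receive the same method string and the same params object. Anything a binding wants to add belongs in the wrapper, not in the name.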

This is the heart of the design: unification without pretending every service is the same service.

Identity belongs in fields

The compact debugging shape that emerged is:

service.action + user_id + optional thread_id

That sentence hides quite a lot of discipline.

The operation name should be stable and boring: service.action, or in the protocol grammar, profile/object.action. Identity does not belong inside that name. The user id is a field. The thread id is a field, and it is optional because not every operation is thread-bound. When execution exists, turn and run ids can join the party. Workspace, resource, capability, and subscription ids can appear when they are the relevant selectors.
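As a rough sketch of what "identity lives in fields" looks like in code, the type below keeps the operation name free of ids and carries the selectors alongside it. The `OperationContext` shape, the field casing, and the helper names are assumptions made for illustration, not the platform's actual types.

```typescript
// Hypothetical shape: the operation name stays stable, identity rides in fields.
interface OperationContext {
  operation: string;  // e.g. "conversation/turn.start"
  userId: string;
  threadId?: string;  // optional: not every operation is thread-bound
  turnId?: string;    // present once execution exists
  runId?: string;
}

// Render the compact debugging shape: service.action + user_id + optional thread_id.
function debugKey(ctx: OperationContext): string {
  const parts = [ctx.operation, `user_id=${ctx.userId}`];
  if (ctx.threadId !== undefined) {
    parts.push(`thread_id=${ctx.threadId}`);
  }
  return parts.join(" ");
}

// Select the records for one user, optionally narrowed to one thread.
function selectRecords(
  records: readonly OperationContext[],
  userId: string,
  threadId?: string,
): OperationContext[] {
  return records.filter(
    (r) => r.userId === userId && (threadId === undefined || r.threadId === threadId),
  );
}
```

Because the ids never leak into the operation name, the same filter works for every service that emits the record.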

This sounds like paperwork until the first time a request crosses a public edge, enters an adapter, starts a turn, calls a runtime, asks an inference authority for tokens, reads workspace state, and then has the audacity to fail. At that point the paperwork becomes the map.

The auth model follows the same line. A service authenticates as itself, but it may also carry delegated caller context: tenant, optional user, scopes, claims, and the selectors needed to prove it is allowed to touch the thing it is touching. That split matters because many calls are both service-to-service and user-scoped. Treating those as one identity is how trust assumptions sneak into the walls.
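A minimal sketch of that split, assuming a simple scope check: the calling service and the delegated caller are modelled as separate values and checked separately. The type names, the `authorize` function, and the scope strings used with it are hypothetical.

```typescript
// Hypothetical types: the service identity and the delegated caller stay separate.
interface DelegatedCaller {
  tenant: string;
  userId?: string;
  scopes: readonly string[];
  claims: Readonly<Record<string, string>>;
  selectors: Readonly<Record<string, string>>; // e.g. { thread_id: "t-1" }
}

interface CallAuth {
  service: string;          // who is making the call
  caller?: DelegatedCaller; // on whose behalf, if anyone
}

type Decision = { allowed: true } | { allowed: false; reason: string };

// Two independent questions: do we trust the calling service, and does the
// delegated caller hold the scope this operation needs?
function authorize(
  auth: CallAuth,
  trustedServices: ReadonlySet<string>,
  requiredScope: string,
): Decision {
  if (!trustedServices.has(auth.service)) {
    return { allowed: false, reason: "untrusted service" };
  }
  if (auth.caller === undefined) {
    return { allowed: false, reason: "no delegated caller context" };
  }
  if (!auth.caller.scopes.includes(requiredScope)) {
    return { allowed: false, reason: `missing scope ${requiredScope}` };
  }
  return { allowed: true };
}
```

A trusted service cannot borrow permissions the caller never had, and a well-scoped caller cannot launder a request through a service nobody trusts.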

Streams are the public trail

Request/response is not enough for agent systems.

The work unfolds. It asks questions. It requests approvals. It emits partial output. It starts tools, receives results, reports usage, creates checkpoints, updates projections, and sometimes needs to be replayed after the viewer reconnects. If every service invents its own event format, the interface becomes a collage of special cases.

So the stream envelope matters. It carries a target, a projection, a sequence, a timestamp, an event type, a payload, and optionally trace context. That gives clients and adapters a stable way to subscribe, replay, resume, and rebuild state without knowing which internal authority produced the event.
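The envelope fields listed above can be sketched as a type, with a small cursor showing how a reconnecting client might use the sequence to resume. This is an illustration under stated assumptions: the field names and `ResumeCursor` are hypothetical, and the cursor assumes sequences are contiguous integers starting at 1 for a given target and projection, which the source does not specify.

```typescript
interface TraceContext {
  traceId: string;
  spanId: string;
}

// Hypothetical envelope carrying the fields named in the text.
interface StreamEnvelope<P = unknown> {
  target: string;     // what the event is about, e.g. a thread or run id
  projection: string; // which view it belongs to, e.g. "transcript"
  sequence: number;   // assumed contiguous per (target, projection)
  timestamp: string;  // ISO 8601
  type: string;       // event type
  payload: P;
  trace?: TraceContext;
}

type Accept = "applied" | "duplicate" | "gap";

// Tracks the last applied sequence so a client can resume after reconnecting.
class ResumeCursor {
  private last: number;

  constructor(lastSeen: number = 0) {
    this.last = lastSeen;
  }

  get position(): number {
    return this.last;
  }

  accept(envelope: StreamEnvelope): Accept {
    if (envelope.sequence <= this.last) {
      return "duplicate"; // replay overlap, safe to drop
    }
    if (envelope.sequence !== this.last + 1) {
      return "gap"; // something was missed: request replay from `position`
    }
    this.last = envelope.sequence;
    return "applied";
  }
}
```

The cursor never inspects the payload or asks which authority produced the event. Ordering and resumption depend only on the envelope.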

The key distinction is that a projection is not the source of truth. A transcript is a projection. A task view is a projection. An external protocol session is a projection. They are allowed to be useful and shaped for their clients, but they are not allowed to found a second government.
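One way to picture this is to treat a projection as a pure function over the ordered events, so it can be thrown away and rebuilt at any time. The event types (`output.delta`, `output.completed`) and the `TranscriptView` shape below are invented for the sketch and are not the protocol's real vocabulary.

```typescript
// Hypothetical source events, as they might arrive on a stream.
type RunEvent =
  | { sequence: number; type: "output.delta"; text: string }
  | { sequence: number; type: "output.completed" };

// A transcript is a view derived from events, not a store of its own.
interface TranscriptView {
  text: string;
  complete: boolean;
  upTo: number; // last sequence folded into the view
}

// Rebuild the view from scratch. Same events in, same view out.
function projectTranscript(events: readonly RunEvent[]): TranscriptView {
  const ordered = [...events].sort((a, b) => a.sequence - b.sequence);
  let view: TranscriptView = { text: "", complete: false, upTo: 0 };
  for (const event of ordered) {
    if (event.type === "output.delta") {
      view = { ...view, text: view.text + event.text, upTo: event.sequence };
    } else {
      view = { ...view, complete: true, upTo: event.sequence };
    }
  }
  return view;
}
```

If the view is lost or suspected of drift, replaying the events rebuilds it. Nothing flows the other way.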

Capabilities without soup

Capabilities are where the design could easily have turned into soup.

A capability can be a tool, a resource, a prompt, an automation, a workflow, or a custom operation. It can be synchronous, streaming, or asynchronous. It can be backed by a built-in handler, a connector, an external protocol, or another internal authority. The trick is not to pretend those are the same implementation. The trick is to give them the same invitation card: here is the capability, here are its operations, here are its schemas, here is its effect scope, here is the policy, here is the context you may carry when invoking it.
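The invitation card can be sketched as a descriptor type. The field names, the effect-scope values, the scope strings, and the two catalog entries below are all assumptions made for illustration. The point is only that a tool backed by a connector and a workflow backed by an internal authority present the same shape to a caller.

```typescript
type Kind = "tool" | "resource" | "prompt" | "automation" | "workflow" | "custom";
type Mode = "sync" | "streaming" | "async";
type Backing = "builtin" | "connector" | "external-protocol" | "internal-authority";

interface OperationDescriptor {
  name: string;
  mode: Mode;
  inputSchema: object;  // a JSON Schema document in a real system (assumption)
  outputSchema: object;
}

// Hypothetical descriptor: one shape, whatever sits behind it.
interface CapabilityDescriptor {
  id: string;
  kind: Kind;
  backing: Backing;
  operations: readonly OperationDescriptor[];
  effectScope: "read" | "write" | "external"; // illustrative values
  policy: { requiredScopes: readonly string[]; requiresApproval: boolean };
  context: readonly string[]; // selectors a caller may carry when invoking
}

// Discovery filters on the descriptor alone, never on the implementation.
function discover(
  all: readonly CapabilityDescriptor[],
  callerScopes: ReadonlySet<string>,
): CapabilityDescriptor[] {
  return all.filter((c) => c.policy.requiredScopes.every((s) => callerScopes.has(s)));
}

const catalog: CapabilityDescriptor[] = [
  {
    id: "search.web",
    kind: "tool",
    backing: "connector",
    operations: [{ name: "query", mode: "sync", inputSchema: {}, outputSchema: {} }],
    effectScope: "external",
    policy: { requiredScopes: ["web:read"], requiresApproval: false },
    context: ["user_id", "thread_id"],
  },
  {
    id: "workspace.snapshot",
    kind: "workflow",
    backing: "internal-authority",
    operations: [{ name: "run", mode: "async", inputSchema: {}, outputSchema: {} }],
    effectScope: "write",
    policy: { requiredScopes: ["workspace:write"], requiresApproval: true },
    context: ["user_id", "workspace_id"],
  },
];
```

Calling `discover(catalog, new Set(["web:read"]))` returns only the first entry. The caller learns what it may invoke without learning how either capability is implemented.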

That makes capability transport useful internally and at the edges. A Model Context Protocol surface can expose tools, prompts, resources, and notifications while translating inward to capability, resource, workspace, conversation, presentation, and stream profiles. An Agent Client Protocol edge can keep its own session shape while mapping real work back to threads and turns. An Agent-to-Agent edge can keep task and context ids while projecting canonical runs and outputs.

The external protocols are therefore satellites, not capitals. They can keep their sessions, task ids, resume cursors, and native envelopes, because clients on those protocols quite reasonably expect them. But once a real piece of work exists, the canonical state is still a thread, a turn, a run, a stream, and a projection.

The adapter’s job is translation and continuity, not founding a second architecture.
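A minimal sketch of an adapter in that spirit: it remembers which external session maps to which thread, and everything else is translated into canonical calls. `EdgeSessionAdapter`, its method names, and the parameter casing are hypothetical, and the injected `createThread` function stands in for a real `conversation/thread.create` call.

```typescript
// The canonical request the adapter produces for an incoming message.
interface TurnStart {
  method: "conversation/turn.start";
  params: { thread_id: string; user_id: string; input: string };
}

// Hypothetical edge adapter: it holds a session-to-thread mapping and nothing else.
class EdgeSessionAdapter {
  private readonly threadBySession = new Map<string, string>();
  private readonly createThread: (userId: string) => string;

  // createThread stands in for a call to conversation/thread.create.
  constructor(createThread: (userId: string) => string) {
    this.createThread = createThread;
  }

  // Continuity: the same external session always lands on the same thread.
  threadFor(sessionId: string, userId: string): string {
    let threadId = this.threadBySession.get(sessionId);
    if (threadId === undefined) {
      threadId = this.createThread(userId);
      this.threadBySession.set(sessionId, threadId);
    }
    return threadId;
  }

  // Translation: an external message becomes a canonical turn.
  toTurnStart(sessionId: string, userId: string, input: string): TurnStart {
    return {
      method: "conversation/turn.start",
      params: { thread_id: this.threadFor(sessionId, userId), user_id: userId, input },
    };
  }
}
```

The adapter stores no transcript, no run state, and no outputs. If it restarts, the only thing it has to recover is the mapping.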

Observability is the proof

The observability story is the reason this architecture is more than taste.

A stable operation name plus a user id plus an optional thread id is enough to turn a pile of spans into a narrative. You can ask where the request entered, which authority owned the slow boundary, which span was on the critical path, and whether the apparent frontend failure was really a replay gap, a runtime delay, an inference timeout, a workspace lock, or a connector permission problem.

OpenTelemetry works best here when the protocol gives it real nouns. Trace id, span id, service name, operation name, user id, thread id, run id, turn id, capability id, workspace id. These are not decorative attributes. They are the difference between “latency happened” and “this adapter spent 1.3 seconds waiting for the workspace authority after a replayed turn tried to fetch a checkpoint.”
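Here is a sketch of how those nouns might become span attributes, using plain records rather than the OpenTelemetry SDK so the example stays self-contained. The `protocol.*` attribute keys are invented for illustration; only `service.name` is borrowed from OpenTelemetry's resource conventions. The `slowestSpan` helper is a deliberately naive stand-in for real critical-path analysis.

```typescript
interface ProtocolIds {
  service: string;
  operation: string;
  userId: string;
  threadId?: string;
  turnId?: string;
  runId?: string;
  capabilityId?: string;
  workspaceId?: string;
}

// Build a flat attribute record. Keys under "protocol." are hypothetical.
function spanAttributes(ids: ProtocolIds): Record<string, string> {
  const attrs: Record<string, string> = {
    "service.name": ids.service,
    "protocol.operation": ids.operation,
    "protocol.user_id": ids.userId,
  };
  const optional: Array<[string, string | undefined]> = [
    ["protocol.thread_id", ids.threadId],
    ["protocol.turn_id", ids.turnId],
    ["protocol.run_id", ids.runId],
    ["protocol.capability_id", ids.capabilityId],
    ["protocol.workspace_id", ids.workspaceId],
  ];
  for (const [key, value] of optional) {
    if (value !== undefined) {
      attrs[key] = value; // only emit selectors that actually apply
    }
  }
  return attrs;
}

interface SpanRecord {
  name: string;
  durationMs: number;
  attributes: Record<string, string>;
}

// Find the slowest span for one user, optionally narrowed to one thread.
function slowestSpan(
  spans: readonly SpanRecord[],
  userId: string,
  threadId?: string,
): SpanRecord | undefined {
  let slowest: SpanRecord | undefined;
  for (const span of spans) {
    if (span.attributes["protocol.user_id"] !== userId) continue;
    if (threadId !== undefined && span.attributes["protocol.thread_id"] !== threadId) continue;
    if (slowest === undefined || span.durationMs > slowest.durationMs) {
      slowest = span;
    }
  }
  return slowest;
}
```

The lookup only works because every service writes the same keys. With three spellings of the thread id, the same query returns three partial answers.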

The local visualizer work made this especially concrete. A debug tap can synthesize stream spans for long-lived event flows, correlate logs with traces, and show one request as a cross-service waterfall. That only works when the services agree on the shape of the story they are telling. Otherwise the visualization becomes a pretty way to confirm that nobody speaks the same language.

Stability through grammar

The surprising part of protocol work is how much stability comes from names.

Not clever names. Plain names. Names that survive transport changes, runtime rewrites, adapter additions, and SDK differences. Names that can appear in TypeScript, Go, Rust, Swift, JSON-RPC, WebSocket, SSE, stdio, or an internal binary binding without acquiring a new personality each time.

This does not mean the protocol is finished or pure. Some profiles are deeper than others. Some bindings are more mature than others. Some older wire shapes still carry historical casing. Some adapters have broader helper layers because the outside protocol expects them. That is fine. A useful protocol is not a museum object. It is a common language under pressure.

The important rule is simple: if a concern is service-specific, keep it in that service; if it is edge-specific, keep it in the adapter; if it is protocol-wide and transport-agnostic, add it to the shared language.

That rule prevents two opposite failures. It prevents the protocol from becoming a dumping ground for every private implementation detail. It also prevents every service from inventing its own vocabulary for the same public act.

The real point

The point of a unifying protocol is not that everything goes through one place. That is centralization, and it has its own failure modes, mostly involving heroic bottlenecks and meetings with architectural diagrams in them.

The point is that when work goes through many places, it still leaves a readable trail.

That is why the service.action + user_id + optional thread_id shape matters. That is why capabilities need descriptors instead of vibes. That is why streams need ordered envelopes. That is why adapters should translate rather than own truth. That is why observability belongs in the protocol from the beginning, not as a tasteful garnish added after the first outage.

A good protocol does not make a distributed system simple. It makes the complexity legible enough that people can act on it.

That is more modest than magic, which is one of the reasons I trust it.