On AI

Taste Is Becoming Infrastructure.

What AI design systems, testing skills, and model prompting notes reveal about the strange new job of turning judgement into reusable machinery.

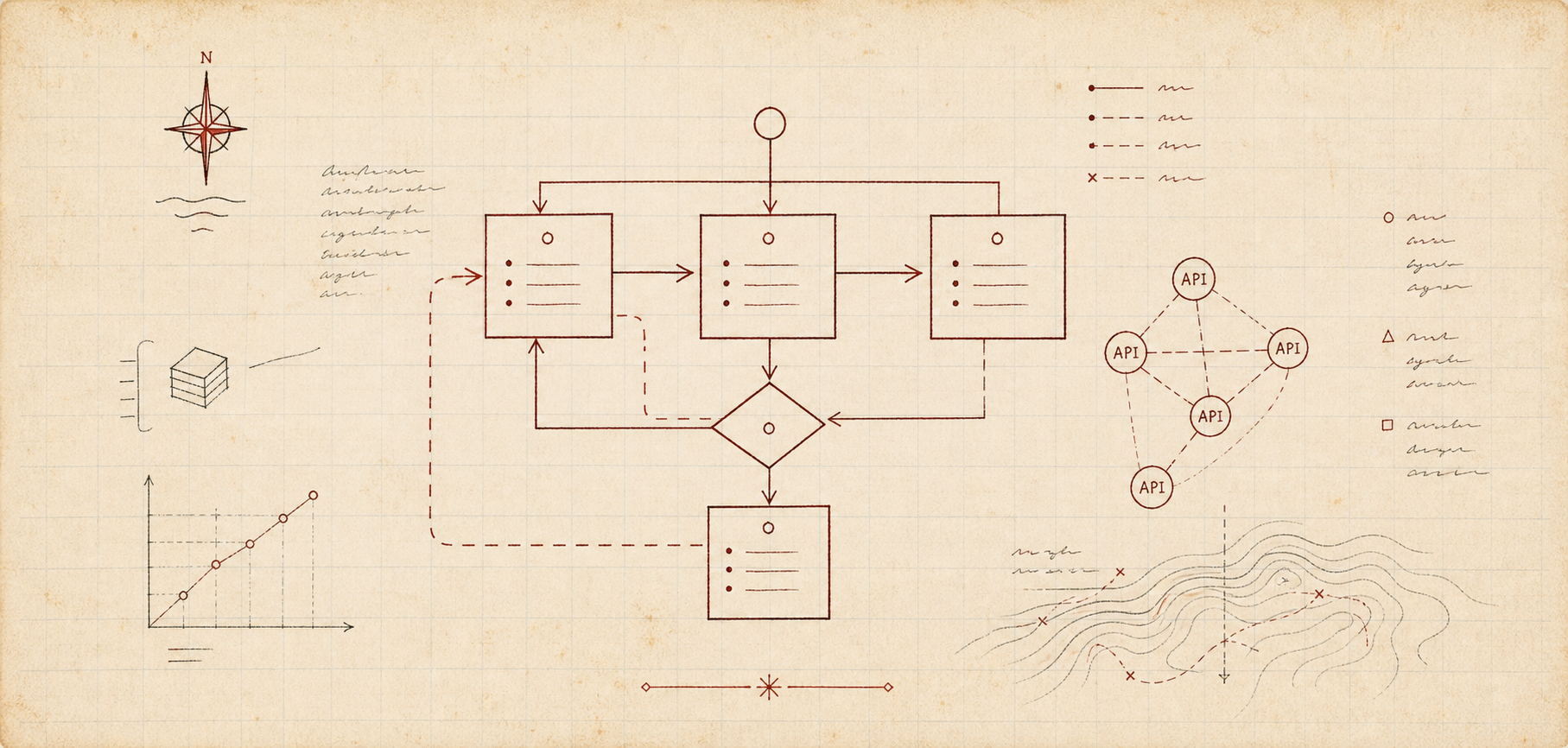

There is a particular kind of artefact that appears when a craft begins to industrialise. It is not the finished object, and it is not quite the tool. It is the rulebook, the workshop jig, the annotated checklist, the laminated card near the machine with three warnings that are only funny because someone once ignored all of them.

AI work is producing these artefacts everywhere now. Not just prompts, which were the first crude version, but skills, design systems, evaluation harnesses, reference repos, memory stores, and little documents that say, in effect: when the agent is about to be clever, please make it be useful instead.

The clearest specimen in my reading list was Kami, a document design system for AI agents. The page says v1.1.0, 2026.04; the GitHub repo was created on April 20, 2026, and was still being actively touched on May 4. Its opening line is almost too neat: “Good content deserves good paper.” Underneath that is a highly specific system of constraints: parchment instead of pure white, ink blue as the only accent, warm neutrals, serif-led hierarchy, controlled shadows, fixed line-height bands, solid hex tag colours to avoid a WeasyPrint rendering bug.

The WeasyPrint detail is the tell. Without it, Kami could be mistaken for moodboard prose. With it, the system becomes something else: taste that has survived contact with an exporter. The rule is not “make it elegant”, because that sentence has no operational content. The rule is “do not use rgba for tags because the renderer creates double rectangles.” That is judgement hardened into an instruction an agent can obey.
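To make the point concrete, here is a minimal sketch of what such a rule might look like once it becomes a check rather than a sentence. It is not Kami's actual tooling: the `.tag` selector, the sample stylesheet, and the function name `find_rgba_in_tags` are all assumptions made for illustration, and the regular expression only handles flat, un-nested CSS.

```python
import re

# Hypothetical rule: tag styles must use solid hex colours, never rgba().
# The ".tag" selector is an assumption for illustration, not Kami's real CSS.
RULE_BLOCK = re.compile(r"([^{}]+)\{([^{}]*)\}")
RGBA_CALL = re.compile(r"rgba\s*\(", re.IGNORECASE)


def find_rgba_in_tags(css: str, selector_hint: str = ".tag") -> list[str]:
    """Return the selectors of tag rules whose body contains an rgba() call."""
    offenders = []
    for selector, body in RULE_BLOCK.findall(css):
        selector = selector.strip()
        # Only rules that look like tag styles are subject to the constraint.
        if selector_hint in selector and RGBA_CALL.search(body):
            offenders.append(selector)
    return offenders


# Invented sample stylesheet: one offending tag rule, one compliant tag rule,
# and one unrelated rule where rgba() is allowed.
SAMPLE_CSS = """
.tag { background: rgba(27, 54, 93, 0.1); }
.tag-solid { background: #e4ecf5; }
.card { box-shadow: 0 1px 2px rgba(0, 0, 0, 0.08); }
"""

if __name__ == "__main__":
    print(find_rgba_in_tags(SAMPLE_CSS))
```

The check is deliberately narrow. It does not try to judge elegance; it only refuses the one pattern that is known to break on export, which is exactly what makes it obeyable.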

This is a quieter form of software than the AI demos usually reward, but it may be the one that matters. If agents are going to produce more of the work, then a great deal of human taste has to move upstream. It has to stop living only in the final pass, where a person sighs at the result and adjusts the spacing by hand, and start living in reusable constraints: fonts, colour limits, examples, anti-patterns, export checks, and the kind of small practical advice that never appears in a brand deck because it sounds insufficiently inspired.

The same movement is visible in adewale/testing-best-practices, a Claude Code skill created on April 11, 2026. It is not simply “write better tests”, because that would be another decorative wish. It loads language-specific guidance, pushes property-based testing when appropriate, looks for skipped tests and log-instead-of-assert behaviour, distinguishes test tiers, and asks the agent to assess assertion density and mock fidelity. It is testing taste packaged as an intervention.

There is, of course, something faintly absurd about teaching an agent to be suspicious of its own tests. But the absurdity is the point. A model can generate a large number of plausible tests very quickly, and plausible tests are one of software’s more treacherous substances. They look responsible. They make CI turn green. They reassure everyone until the first real bug walks through them with its shoes on.

So the lesson is not that agents need more tests. The lesson is that agents need better inherited suspicion. They need to know that a test with no meaningful assertion is paperwork, that an integration test made entirely of mocks is theatre, and that a suite which cannot fail for the right reason is not a safety net so much as a decorative hammock.
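Inherited suspicion of this kind can be partly mechanised. The sketch below is not taken from the skill itself; it is an assumed, simplified illustration of two of the checks described above, flagging test functions that are skipped and test functions that contain no assertion at all. The function name `audit_tests` and the heuristics (a decorator mentioning "skip", a call to `raises` or an `assert*` method) are my own simplifications.

```python
import ast


def audit_tests(source: str) -> dict[str, list[str]]:
    """Flag test functions that are skipped or contain no assertion."""
    findings: dict[str, list[str]] = {"no_assertion": [], "skipped": []}
    for node in ast.walk(ast.parse(source)):
        if not isinstance(node, ast.FunctionDef):
            continue
        if not node.name.startswith("test_"):
            continue
        # Heuristic: any decorator whose source mentions "skip" marks a skip.
        if any("skip" in ast.unparse(d) for d in node.decorator_list):
            findings["skipped"].append(node.name)
        # Plain `assert` statements anywhere inside the function body.
        has_assert = any(isinstance(c, ast.Assert) for c in ast.walk(node))
        # Method-style assertions such as self.assertEqual(...) or pytest.raises(...).
        has_assert_call = any(
            isinstance(c, ast.Call)
            and isinstance(c.func, ast.Attribute)
            and (c.func.attr.startswith("assert") or c.func.attr == "raises")
            for c in ast.walk(node)
        )
        if not (has_assert or has_assert_call):
            findings["no_assertion"].append(node.name)
    return findings


# Invented sample: one log-instead-of-assert test, one skipped test, one real test.
SAMPLE = '''
import pytest

def test_logs_only():
    result = 1 + 1
    print(result)

@pytest.mark.skip(reason="flaky")
def test_skipped():
    assert 1 + 1 == 2

def test_real():
    assert 1 + 1 == 2
'''

if __name__ == "__main__":
    print(audit_tests(SAMPLE))
```

A check like this cannot tell whether an assertion is meaningful, only whether one exists. That gap is where the written guidance still has to do the work.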

Amp’s Opus 4.7 note belongs in the same drawer. Its practical advice is not to script every move for the model, but to define success clearly: no public API changes, no database changes, the relevant tests pass, the typechecker passes. This is a subtle but important distinction. Steps are a way of lending the model a pair of hands; success criteria are a way of lending it judgement.
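One way to picture the difference is to write the success criteria down as data rather than as steps. The following is a sketch under stated assumptions, not Amp's format: `ChangeReport`, `SUCCESS_CRITERIA`, and `unmet_criteria` are hypothetical names, and the report is assumed to be filled in by whatever tooling inspects the agent's change.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ChangeReport:
    """Hypothetical summary of what an agent's change actually did."""
    public_api_changed: bool
    database_changed: bool
    tests_passed: bool
    typecheck_passed: bool


# Each criterion is a readable name plus a predicate over the report.
# Nothing here says how to do the work, only what must hold when it is done.
SUCCESS_CRITERIA = {
    "no public API changes": lambda r: not r.public_api_changed,
    "no database changes": lambda r: not r.database_changed,
    "relevant tests pass": lambda r: r.tests_passed,
    "typechecker passes": lambda r: r.typecheck_passed,
}


def unmet_criteria(report: ChangeReport) -> list[str]:
    """Return the names of the criteria the change fails to satisfy."""
    return [name for name, check in SUCCESS_CRITERIA.items() if not check(report)]


if __name__ == "__main__":
    # Invented example: the change passes its checks but touched the public API.
    report = ChangeReport(
        public_api_changed=True,
        database_changed=False,
        tests_passed=True,
        typecheck_passed=True,
    )
    print(unmet_criteria(report))
```

The list contains no instructions at all. The agent is free to choose its route, and the criteria only decide whether the destination counts.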

The more capable the model becomes, the more valuable this distinction gets. A weak model needs instructions because it cannot infer enough. A strong model needs constraints because it can infer too much. It can solve a neighbouring problem with impressive fluency, polish the wrong surface, or refactor a piece of code that was meant to be left alone. The craft is no longer just prompting the model forward; it is drawing the boundary within which forward motion counts as progress.

This is where Kami, testing skills, and model-operation notes begin to rhyme. They all take something that used to live tacitly in a senior person’s head and make it legible to a machine. Not perfectly, and not finally, but usefully. The designer’s restraint becomes a palette and a shadow rule. The test engineer’s scepticism becomes an anti-pattern catalogue. The staff engineer’s sense of “done” becomes a list of invariants the agent must preserve.

There is a temptation to describe this as automation replacing taste, but that is too neat and mostly wrong. The more precise version is that automation increases the return on taste that can be expressed as structure. Vague taste still evaporates at the edge of the model. Operational taste compounds.

This also explains why some AI-generated work feels so strangely bad even when it is competent. It has capability without discipline. The sentences parse, the UI renders, the tests run, the document exports, but nothing in the system has told the agent what kind of thing it is making or which forms of success are cheap impostors. The result is not failure in the old sense. It is abundance without standards, which is more exhausting because it arrives wearing the costume of productivity.

The interesting builders are responding by making standards portable. They are not just producing outputs; they are producing taste carriers. Skills. Style guides. Reference implementations. Evaluation fixtures. Memory documents. Little systems that can be handed to an agent before the work begins, so that the first draft is not merely fluent but pointed in the right direction.

In an earlier software era, infrastructure meant servers, queues, databases, and deployment scripts. Those still matter, but AI work adds a stranger layer: the infrastructure of preference. What should a good document feel like? What should a reliable test prove? What should an agent leave alone? What should it ask before changing anything? What counts as done?

The funny thing is that this makes the human role both more abstract and more concrete. More abstract, because the work shifts from making each object to shaping the system that makes objects. More concrete, because hand-wavy standards no longer survive. The agent will do exactly what the rule permits, and the rule will reveal whether the taste behind it was real.

Taste, in other words, is becoming infrastructure. Not because machines have acquired it, but because we have started writing down the parts of it that were always engineering.